Doing Data Science Better with Wolfram and the Multiparadigm Approach

Just as Wolfram was doing AI before it was cool, so have we been doing data science since before it was mainstream. A prime example is the creation of Wolfram|Alpha—a massive project that involved engineering, modeling, analyzing, visualizing and interfacing with terabytes of data, developing a natural language interface, and deploying results in a sensible way. Wolfram|Alpha itself is a tool for doing data science, and its continued success is largely because of the underlying strategy we used to build it: a multiparadigm approach driven by natural curiosity, exploring all kinds of data, using advanced methods from a range of areas and automating as much as possible.

Any approach to data science can only be as effective as the computational tools driving it; luckily for us, we had the Wolfram Language at our disposal. Leveraging its universal symbolic representation, high-level automation and human readability—as well as its broad range of built-in computation, knowledge and interfaces—streamlined our process to help bring Wolfram|Alpha to fruition. In this post, I’ll discuss some key tenets of the multiparadigm approach, then demonstrate how they combine with the computational intelligence of the Wolfram Language to make the ideal workflow for not only discovering and presenting insights from your data, but also for creating scalable, reusable applications that optimize your data science processes.

Lead with Questions, Not Techniques

Given all the buzzwords floating around over the past few years—automated machine learning, edge AI, adversarial neural networks, natural language processing—you might be tempted to grab a sleek new method from arXiv and call it a solution. And while this can be a convenient way to solve a specific problem at hand, it also tends to limit the range of questions you can answer. One main goal of the multiparadigm approach is to remove those kinds of constraints from your workflow, instead letting questions and curiosity drive your analysis.

Leading with questions is easiest when you start from a high level. Wolfram Language functions use built-in parameter selection, which lets you focus on the overarching task rather than the technical details. Your workflow might require data from any number of sources and formats; Import automatically detects the file type and structure of your data:

Engage with the code in this post by downloading the Wolfram Notebook

Import["surveydata.csv"] // Shallow

SemanticImport goes even further, interpreting expressions in each field and displaying everything in an easy-to-view Dataset:

data = SemanticImport["surveydata.csv"]

Apply FindDistribution to your data, and it auto-selects a fitting method to give you an approximate distribution:

dist = FindDistribution[ages = Normal@data[All, "Age"]]

Another quick line of code generates a SmoothHistogram plot comparing the actual data to the computed distribution:

SmoothHistogram[{ages, RandomVariate[dist, Length[ages]]},
 PlotLegends -> {"Data", "Computed"}]

You can then ask yourself, “What is going on in that graph?”, drill down with more computation and get more info about the fit:

DistributionFitTest[ages, dist, "TestDataTable"]

Go deeper by trying specific distributions:

Column[Table[
  DistributionFitTest[ages,
   FindDistribution[ages, TargetFunctions -> {d}], "TestDataTable"],
  {d, {NormalDistribution, PascalDistribution, NegativeBinomialDistribution}}]]

The simple input-output flow of a Wolfram Notebook helps move the process forward, with each step building on the previous computation. Every evaluation gives clear output that can be used immediately in further analysis, letting you code as fast as you think (or, at least, as fast as you type).

Using a question-answer workflow with human-readable functions and interactive coding gives you unprecedented freedom for computational exploration.

Work with All Kinds of Data

Although it’s easy to think of data science as a numbers game, the best insights often come from exploring images, audio, text, graphs and other data. In most systems, this involves either tracking down and switching between frameworks to support different data types or writing custom code to convert everything to the appropriate type and structure.

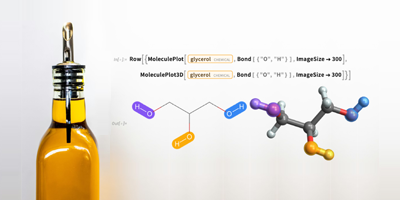

But again, underlying technical details shouldn’t be the focus of your data science workflow. To simplify the process, the Wolfram Data Framework (WDF) expresses everything the same way: tables and matrices, text, images, graphics, graphs and networks are all represented as symbolic expressions.
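One way to see this uniformity is to inspect the heads of several kinds of data. The following is a minimal sketch; the sample values are made up for illustration and are not taken from the survey data above.

```wolfram
(* Illustrative values only: a matrix, text, a graph, a graphic, an image and an entity *)
examples = {
   {{1, 2}, {3, 4}},
   "some text",
   Graph[{1 <-> 2, 2 <-> 3}],
   Graphics[Disk[]],
   Image[{{0, 1}, {1, 0}}],
   Entity["Country", "UnitedStates"]
   };

(* Every item is an ordinary expression with a head, so generic functions apply to all of them *)
Head /@ examples
(* {List, String, Graph, Graphics, Image, Entity} *)
```

Because each item is just an expression, the same structural functions (Head, Map, Part and so on) can be applied without converting between frameworks.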

This reduces the time and effort for reading standard data types, sorting through unstructured data or handling mixed data types. Wolfram Language functions generally work on a variety of data types, such as LearnDistribution (big brother to FindDistribution), which can generate a probability distribution for a set of images:

images = ResourceData["CIFAR-100"][[All, 1]];

ld = LearnDistribution[images]

The distribution can be used to examine the likelihood of a given image being from the set:

Grid[Table[{i, RarerProbability[ld, i]}, {i, CloudGet["https://wolfr.am/DLdsWtJA"]}]]

You can even generate new representative samples:

RandomVariate[ld, 10]

WDF also makes it easy to construct high-level entities with uniformly structured data. This is especially useful for representing complex real-world concepts:

us = Entity["Country", "UnitedStates"]; RandomSample[us["Properties"], 5]

You can use high-level natural language input for immediate access to entities and their properties:

(* Entered in a notebook as the natural language query "all us states", which resolves to the entity class below *)
states = EntityList[EntityClass["AdministrativeDivision", "AllUSStatesPlusDC"]]

Entities and associated data values can then be used and combined in computations:

GeoRegionValuePlot[states -> EntityProperty["AdministrativeDivision", "Population"], GeoRange -> us]

You can build entire computational workflows based on this curated knowledge. Custom entities made with EntityStore have the same flexibility. With data unified through WDF, you won’t have to worry about the size, type or structure of data—leaving more time for finding answers.
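As a rough illustration of custom entities, the sketch below defines a small EntityStore and queries it like curated data. The "Sensor" type, the entity names and all property values are hypothetical and chosen only for the example.

```wolfram
(* Hypothetical entity type "Sensor" with made-up entities and property values *)
store = EntityStore["Sensor" -> <|
     "Entities" -> <|
       "WheelFL" -> <|"Location" -> "front left",
         "SampleRate" -> Quantity[500, "Hertz"]|>,
       "WheelFR" -> <|"Location" -> "front right",
         "SampleRate" -> Quantity[250, "Hertz"]|>
       |>
     |>];

(* Make the store available to Entity and EntityValue in this session *)
EntityRegister[store];

(* Query the custom entities with the same functions used for built-in ones *)
Entity["Sensor", "WheelFL"]["Location"]
EntityValue[EntityList["Sensor"], "SampleRate", "EntityAssociation"]
```

Once registered, the custom type can be used with the same lookup functions as built-in entity types such as "Country" or "City".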

Broaden Your Computational Scope

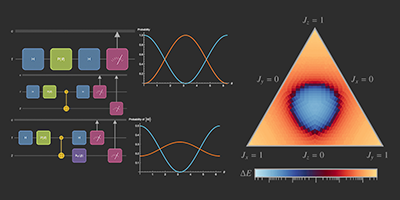

Discovery comes from trying new ideas, so a truly discovery-focused data science workflow should go beyond standard areas like statistics and machine learning. You can find more by testing and combining different computational techniques on your data—borrowing from geocomputation, time series analysis, signal processing, network analysis and engineering. To do that, you need algorithms for every subject and discipline, as well as the ability to change techniques without a major code rewrite.

Fortunately, Wolfram Language code uses the same symbolic structure as its data, ensuring maximum compatibility with no preprocessing required. Computational methods (e.g. model selection, data resampling, plot styles) are also standardized and automated across a range of functionality. Syntax, logic and conventions are uniform no matter what domain you’re exploring.

For a case in point, look at our data exploration of sensors from the ThrustSSC supersonic car. Standard data partitioning and time series techniques were useful in translating and understanding the data. But we also opted to try a few unconventional approaches, such as using CommunityGraphPlot to group together similar datasets:
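The ThrustSSC data is not reproduced here, so the following is only a sketch of the general idea using synthetic stand-in channels. The signals, the noise level and the 0.5 correlation threshold are all assumptions made for illustration, not the method used in the original analysis.

```wolfram
SeedRandom[1];

(* Hypothetical stand-ins for sensor channels: two groups, each sharing a base signal plus noise *)
t = Range[0., 10., 0.05];
noisy[f_] := Map[f, t] + RandomVariate[NormalDistribution[0, 0.3], Length[t]];
channels = Join[Table[noisy[Sin], 4], Table[noisy[Cos[3 #] &], 4]];

(* Link two channels when their absolute correlation exceeds an arbitrary threshold *)
corr = Abs[Correlation[Transpose[channels]]];
adj = UnitStep[corr - 0.5] - IdentityMatrix[Length[channels]];
g = AdjacencyGraph[adj, VertexLabels -> "Name"];

(* Draw the graph with detected communities grouped together *)
CommunityGraphPlot[g]
```

With these synthetic inputs, channels built from the same base signal should end up in the same community, which is the kind of grouping the text describes.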

A dive into signal processing—specifically, wavelet analysis—led to the discovery of certain discontinuities in the vibrational frequency of a wheel:

As it turned out, these gaps represented the wheel’s top edge crossing the sound barrier—a phenomenon that was understood by the engineers but had not been verified by previous analyses. Even a quick exploration using a broad, high-level toolset can provide better insights with less expertise (and a lot less code) than more siloed approaches.
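To give a feel for how wavelet analysis can expose this kind of discontinuity, here is a minimal sketch on a synthetic signal whose frequency jumps halfway through. The signal and its frequencies are invented for the example and have no connection to the actual wheel data.

```wolfram
(* Synthetic signal: 5 Hz for the first half, 12 Hz for the second (made-up values) *)
signal = Table[
   If[t < 5, Sin[2 Pi 5 t], Sin[2 Pi 12 t]],
   {t, 0., 10., 0.01}];

(* Continuous wavelet transform with default wavelet and scale settings *)
cwt = ContinuousWaveletTransform[signal];

(* The scalogram shows energy moving between scales where the frequency changes *)
WaveletScalogram[cwt]
```

The abrupt change appears in the scalogram as a shift in which scales carry the signal's energy, something that is hard to see in a plot of the raw samples.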

Clearly Communicate Insights for All Audiences

Data science doesn’t stop with the discovery of answers; you need to interpret and share your results before anyone can act on them. That means presenting the right information to the right people in the right way, whether it’s sending a few static visualizations, deploying an interactive desktop or web app, generating an automated report or making a full write-up of your project. One major goal of the multiparadigm approach is to express insights in the clearest way possible, regardless of context.

For the basic cases, the Wolfram Language has visualizations for a range of analyses, with high-level plot themes and the ability to add labels, frames and other details all inline:

cities = EntityClass["City", {
     EntityProperty["City", "Country"] -> Entity["Country", "UnitedStates"],
     EntityProperty["City", "Population"] -> TakeLargest[10]}]["Entities"];

BubbleChart[
 EntityValue[cities, {"GiniIndex", "Area", "PerCapitaIncome"}],
 PlotTheme -> "Marketing", ColorFunction -> "TemperatureMap",
 ChartLabels -> Callout[EntityValue[cities, "Name"]],
 PlotLabel -> Style["Gini Index Data: 10 Largest U.S. Cities", "Title", 24]]

And using functions like Manipulate, you can interactively explore additional parameters to find patterns in the data:

With[{params = {
    EntityProperty["City", "HousingAffordability"],
    EntityProperty["City", "MedianHouseholdIncome"],
    EntityProperty["City", "PopulationByEducationalAttainment"],
    EntityProperty["City", "PublicTransportationAnnualPassengerMiles"],
    EntityProperty["City", "UnemploymentRate"],
    EntityProperty["City", "RushHours"]}},
 Manipulate[
  BubbleChart[EntityValue[cities, {"GiniIndex", y, z}]],
  {y, params}, {z, params}]]

Documenting your project’s workflow can be equally important; a clear, detailed narrative gives real-world context to an analysis and makes it understandable to a broad audience. Combining interactive, human-readable code with well-organized plain-language text creates what Stephen Wolfram calls a computational essay.

This kind of high-level document is easy to produce using Wolfram Notebooks, which let you mix code, text, images, interfaces and other expressions in a hierarchical cell structure. With built-in interactivity and live code, the audience can follow the same discovery process as the author.

Computational essays are typical of the kind of output produced by the multiparadigm approach. But sometimes you need more information with fewer words—say, a financial dashboard:

From there, you can send the notebook to anyone for interactive viewing with Wolfram Player. Or deploy it as a web form in the Wolfram Cloud, add permissions for your colleagues and set up an automated report. You could even set up an API so others can create their own interfaces. It’s all built into the language.
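As a hedged sketch of what cloud deployment can look like, the code below deploys one API and one web form. Both functions are hypothetical toy examples, and evaluating them requires being signed in to the Wolfram Cloud.

```wolfram
(* Hypothetical API: returns the squares of 1..n; publicly callable *)
api = CloudDeploy[
   APIFunction[{"n" -> "Integer"}, Range[#n]^2 &],
   Permissions -> "Public"];

(* Hypothetical web form: asks for a city and returns its population; visible only to the owner *)
form = CloudDeploy[
   FormFunction[{"city" -> "City"}, #city["Population"] &],
   Permissions -> "Private"];

(* Each deployment returns a CloudObject holding the URL to share *)
{api, form}
```

The Permissions option controls who can reach each deployed object, so the same code can serve a private prototype or a public endpoint.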

Every interface has its unique use for data scientists and end users. The Wolfram Language gives you access to the full spectrum of interfaces for analyzing and reporting on your data—and they can be made permanently accessible for interactive viewing from anywhere, making them ideal for sharing ideas with the wider world.

Automate from Start to Finish

Following these principles leads to an optimized workflow that includes every aspect of the data science process, from data to deployment. Taking it a step further, the multiparadigm approach uses automation as much as possible, both simplifying the task at hand and making subsequent explorations easier.

This brings us back to Wolfram|Alpha: an adaptive web application that accepts a broad range of input styles, automatically chooses the appropriate data source for a given task and runs optimized computations on that data to provide answers at scale. When viewed as a whole, the system exemplifies the multiparadigm approach.

For instance, take a question involving revenue and GDP:

In this case, the system must first interpret the natural language statement, retrieving the entities and functions necessary to compute the ratio of revenue to GDP during a given time period. But beyond having access to the right data, it must be able to bring those different data sources together instantaneously when a response is needed.

Another example is revenue forecasting:

On top of the steps from the previous example, this computation also uses automated model selection, in this case choosing log-normal random walks. And in both cases, the system returns additional information to the user, all organized in a high-level report.
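Wolfram|Alpha's internal forecasting pipeline is not shown in this post. As a related illustration of automated model selection in the Wolfram Language, the sketch below uses TimeSeriesModelFit on a made-up revenue series; the data and the four-step horizon are assumptions for the example.

```wolfram
SeedRandom[7];

(* Hypothetical quarterly revenue figures, generated as a noisy upward drift *)
revenue = 100 + Accumulate[RandomVariate[NormalDistribution[1, 3], 40]];

(* With no model family given, one is selected automatically *)
model = TimeSeriesModelFit[revenue];

(* Inspect the selected process, then forecast the next four steps *)
model["BestFit"]
TimeSeriesForecast[model, {4}]
```

The selected process depends on the data, so a different series may lead to a different model family without any change to the code.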

Wolfram|Alpha can be used in this way for all kinds of analysis, at any scale, always using the latest algorithms and data—making the full data science process available through simple natural language queries.

A Better Approach to Data Science

Ultimately, the best insights come from augmenting human curiosity with intelligent computation—and that’s exactly what multiparadigm data science in the Wolfram Language achieves. The result is a scalable, start-to-finish computational workflow designed around human understanding and usability.

Making high-level computation more accessible leads to the democratization of data science, giving anyone with questions immediate access to answers. The multiparadigm approach is more than just a new method for data science; by creating and sharing high-level tools for interactive exploration, it makes a new generation of data science possible.

So what kind of insights does your data hold? There’s only one way to find out: start exploring!
