Why Wolfram Tech Isn’t Open Source—A Dozen Reasons

Over the years, I have been asked many times about my opinions on free and open-source software. Sometimes the questions are driven by comparison to some promising or newly fashionable open-source project, sometimes by comparison to a stagnating open-source project and sometimes by the belief that Wolfram technology would be better if it were open source.

At the risk of provoking the fundamentalist end of the open-source community, I thought I would share some of my views in this blog. While there are counterexamples to most of what I have to say, not every point applies to every project, and I am somewhat glossing over the different kinds of “free” and “open,” I hope I have crystallized some key points.

Much of this blog could be summed up with two answers: (1) free, open-source software can be very good, but it isn’t good at doing what we are trying to do; and a large part of the reason is that (2) open source distributes design over small, self-assembling groups who individually tackle parts of an overall task, but large-scale, unified design needs centralized control and sustained effort.

I came up with 12 reasons why I think that it would not have been possible to create the Wolfram technology stack using a free and open-source model. I would be interested to hear your views in the comments section below the blog.

- A coherent vision requires centralized design

- High-level languages need more design than low-level languages

- You need multidisciplinary teams to unify disparate fields

- Hard cases and boring stuff need to get done too

- Crowd-sourced decisions can be bad for you

- Our developers work for you, not just themselves

- Unified computation requires unified design

- Unified representation requires unified design

- Open source doesn’t bring major tech innovation to market

- Paid software offers an open quid pro quo

- It takes steady income to sustain long-term R&D

- Bad design is expensive

1. A coherent vision requires centralized design

FOSS (free and open-source software) development can work well when design problems can be distributed to independent teams who self-organize around separate aspects of a bigger challenge. If computation were just about building a big collection of algorithms, then this might be a successful approach.

But Wolfram’s vision for computation is much more profound: to unify and automate computation across computational fields, application areas, user types, interfaces and deployments. Achieving this requires centralized design of all aspects of the technology: how computations fit together, as well as how they work. It requires knowing how computations can leverage other computations and, perhaps most importantly, having a long-term vision for the future capabilities that they will make possible in subsequent releases.

You can get a glimpse of how much is involved by sampling the 300+ hours of livestreamed Wolfram design review meetings.

Practical benefits of this include:

- The very concept of unified computation has been largely led by Wolfram.

- High backward and forward compatibility as computation extends to new domains.

- Consistency across different kinds of computation (one syntax, consistent documentation, common data types that work across many functions, etc.).

2. High-level languages need more design than low-level languages

The core team for open-source language design is usually very small and therefore tends to focus on a minimal set of low-level language constructs to support the language’s key concepts. Higher-level concepts are then delegated to the competing developers of libraries, who design independently of each other or the core language team.

Wolfram’s vision of a computational language is the opposite of this approach. We believe in a language that focuses on delivering the full set of standardized high-level constructs that allows you to express ideas to the computer more quickly, with less code, in a literate, human-readable way. Only centralized design and centralized control can achieve this in a coherent and consistent way.

Practical benefits of this include:

- One language to learn for all coding domains (computation, data science, interface building, system integration, reporting, process control, etc.)—enabling integrated workflows for which these are converging.

- Code that is on average seven times shorter than Python, six times shorter than Java and three times shorter than R.

- Code that is readable by both humans and machines.

- Minimal dependencies (no collections of competing libraries from different sources with independent and shifting compatibility).

3. You need multidisciplinary teams to unify disparate fields

Self-assembling development teams tend to rally around a single topic and so tend to come from the same community. As a result, one sees many open-source tools tackle only a single computational domain. You see statistics packages, machine learning libraries, image processing libraries—and the only open-source attempts to unify domains are limited to pulling together collections of these single-domain libraries and adding a veneer of connectivity. Unifying different fields takes more than this.

Because Wolfram is large and diverse enough to bring together people from many different fields, it can take on the centralized design challenge of finding the common tasks, workflows and computations of those different fields. Centralized decision making can target new domains and professionally recruit the necessary domain experts, rather than relying on them to identify the opportunity for themselves and volunteer their time to a project that has not yet touched their field.

Practical benefits of this include:

- Provides a common language across domains including statistics, optimization, graph theory, machine learning, time series, geometry, modeling and many more.

- Provides a common language for engineers, data scientists, physicists, financial engineers and many more.

- Tasks that cross different data and computational domains are no harder than domain-specific tasks.

- Engaged with emergent fields such as blockchain.

4. Hard cases and boring stuff need to get done too

Much of the perceived success of open-source development comes from its access to “volunteer developers.” But volunteers tend to be drawn to the fun parts of projects—building new features that they personally want or that they perceive others need. While this often starts off well and can quickly generate proof-of-concept tools, good software has a long tail of less glamorous work that also needs to be done. This includes testing, debugging, writing documentation (both developer and user), relentlessly refining user interfaces and workflows, porting to a multiplicity of platforms and optimizing across them. Even when the work is done, there is a long-term liability in fixing and optimizing code that breaks as dependencies such as the operating system change over many years.

While it would not be impossible for a FOSS project to do these things well, the commercially funded approach of having paid employees directed to deliver good end-user experience does, over the long term, a consistently better job on this “final mile” of usability than relying on goodwill.

Some practical benefits of this include:

- Tens of thousands of pages of consistently and highly organized documentation with over 100,000 examples.

- The most unified notebook interface in the world, unifying exploration, code development, presentation and deployment workflows in a consistent way.

- Write-once deployment over many platforms both locally and in the cloud.

5. Crowd-sourced decisions can be bad for you

While bad leadership is always bad, good leadership is typically better than compromises made in committees.

Your choice of computational tool is a serious investment. You will spend a lot of time learning the tool, much of your future work will be built on top of it, and you may have to pay license fees as well. In practice, it is likely to be a long-term decision, so it is important that you have confidence in the technology’s future.

Because open-source projects are directed by their contributors, there is a risk of hijacking by interest groups whose view of the future is not aligned with yours. The theoretical safety net of access to source code can compound the problem by producing multiple forks of projects, so that it becomes harder to share your work as communities are divided between competing versions.

While the commercial model does not guarantee protection from this issue, it does guarantee a single authoritative version of technology and it does motivate management to be led by decisions that benefit the majority of its users over the needs of specialist interests.

In practice, if you look at Wolfram Research’s history, you will see:

- Ongoing development effort across all aspects of the Wolfram technology stack.

- Consistency of design and compatibility of code and documents over 30 years.

- Consistency of prices and commercial policy over 30 years.

6. Our developers work for you, not just themselves

Many open-source tools are available as a side effect of their developers’ needs or interests. Tools are often created to solve a developer’s problem and are then made available to others, or researchers apply for grants to explore their own area of research and code is made available as part of academic publication. Figuring out how other people want to use tools and creating workflows that are broadly useful is one of those long-tail development problems that open source typically leaves to the user to solve.

Commercial funding models reverse this motivation. Unless we consider the widest range of workflows, spend time supporting them and ensure that algorithms solve the widest range of inputs, not just the original motivating ones, people like you will not pay for the software. Only by listening to both the developers’ expert input and the commercial teams’ understanding of their customers’ needs and feedback is it possible to design and implement tools that are useful to the widest range of users and create a product that is most likely to sell well. We don’t always get it right, but we are always trying to make the tool that we think will benefit the most people, and is therefore the most likely to help you.

Practical benefits include:

- Functionality that accepts the broadest range of inputs.

- Incremental maintenance and development after initial release.

- Accessible from other languages and technologies and through hundreds of protocols and data formats.

7. Unified computation requires unified design

Complete integration of computation over a broad set of algorithms requires significantly more design work than simply implementing a collection of independent algorithms.

Design coherence is important for enabling different computations to work together without making the end user responsible for converting data types, mapping functional interfaces or rethinking concepts by having to write potentially complex bridging code. Only design that transcends a specific computational field and the details of computational mechanics makes accessible the power of the computations for new applications.

The typical unmanaged, single-domain, open-source contributors will not easily bring this kind of unification, however knowledgeable they are within their domain.

Practical benefits of this include:

- Avoids costs of switching between systems and specifications (having to write excessive glue code to join different libraries with different designs).

- Immediate access to unanticipated functions without stopping to hunt for libraries.

- Wolfram developers can get the same benefits of unification as they create more sophisticated implementations of new functionality by building on existing capabilities.

- The Wolfram Language’s task-oriented design allows your code to benefit from new algorithms without having to rewrite it.

8. Unified representation requires unified design

Computation isn’t the only thing that Wolfram is trying to unify. To create productive tools, it is necessary to unify the representation of disparate elements involved in a computational workflow: many types of rich data, documents, interactivity, visualizations, programs, deployments and more. A truly unified computational representation enables abstraction above each of these individual elements, enabling new levels of conceptualization of solutions as well as implementing more traditional approaches.

The open-source model of bringing separately conceived, independently implemented projects together is the antithesis of this approach—either because developers design representations around a specific application that are not rich enough to be applied in other applications, or if they are widely applicable, they only tackle a narrow slice of the workflow.

Often the consequence is that data interchange is done in the lowest common format, such as numerical or textual arrays—often the native types of the underlying language. Associated knowledge is discarded; for example, that the data represents a graph, or that the values are in specific units, or that text labels represent geographic locations, etc. The management of that discarded knowledge, the coercion between types and the preparation for computation must be repeatedly managed by the user each time they apply a different kind of computation or bring a new open-source tool into their toolset.
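To make the cost of lowest-common-format interchange concrete, here is a minimal Python sketch. The `Length` class, the `METERS_PER_UNIT` table and `export_as_plain_numbers` are hypothetical names invented for this illustration; they are not taken from any real library or from the Wolfram Language. The only point is that a bare numeric array drops the unit knowledge that a richer representation keeps.

```python
from dataclasses import dataclass

# Hypothetical conversion table, for illustration only.
METERS_PER_UNIT = {"m": 1.0, "km": 1000.0, "mi": 1609.344}


@dataclass(frozen=True)
class Length:
    """A value that carries its unit with it (hypothetical type)."""
    value: float
    unit: str

    def to(self, unit: str) -> "Length":
        # Convert via meters so any pair of known units works.
        meters = self.value * METERS_PER_UNIT[self.unit]
        return Length(meters / METERS_PER_UNIT[unit], unit)

    def __add__(self, other: "Length") -> "Length":
        # Bring the other operand into our unit before adding.
        return Length(self.value + other.to(self.unit).value, self.unit)


def export_as_plain_numbers(lengths):
    """Lowest-common-format interchange: only the bare floats survive."""
    return [x.value for x in lengths]


legs = [Length(5.0, "km"), Length(2.0, "mi")]

# Rich representation: the unit knowledge travels with the data.
total_rich = legs[0] + legs[1]

# Plain-array interchange: the receiving tool sees [5.0, 2.0] and cannot
# know that the second number is in miles, so a naive sum is silently wrong.
total_plain = sum(export_as_plain_numbers(legs))
```

Here `total_rich` is a length of roughly 8.22 km, while `total_plain` is 7.0, a number with no meaningful unit. Recovering the right answer from the plain array is exactly the bookkeeping that falls back on the user.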

Practical examples of this include:

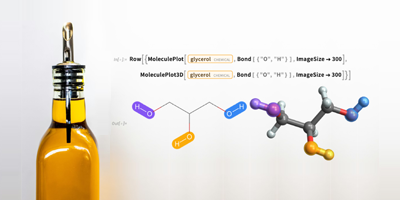

- The Wolfram Language can use the same operations to create or transform many types of data, documents, interfaces and even itself.

- Wolfram machine learning tools automatically accept text, sounds, images and numeric and categorical data.

- As well as doing geometry calculations, the geometric representations in the Wolfram Language can be used to constrain optimizations, define regions of integration, control the envelope of visualizations, set the boundary values for PDE solvers, create Unity game objects and generate 3D prints.

9. Open source doesn’t bring major tech innovation to market

FOSS development tends to react to immediate user needs—specific functionality, existing workflows or emulation of existing closed-source software. Major innovations require anticipating needs that users do not know they have and addressing them with solutions that are not constrained by an individual’s experience.

As well as having a vision beyond incremental improvements and narrowly focused goals, innovation requires persistence to repeatedly invent, refine and fail until successful new ideas emerge and are developed to mass usefulness. Open source does not generally support this persistence over enough different contributors to achieve big, market-ready innovation. This is why most large open-source projects are commercial projects, started as commercial projects or follow and sometimes replicate successful commercial projects.

While the commercial model certainly does not guarantee innovation, steady revenue streams are required to fund the long-term effort needed to bring innovation all the way to product worthiness. Wolfram has produced key innovations over 30 years, not least having led the concept of computation as a single unified field.

Open source often does create ecosystems that encourage many small-scale innovations, but while bolder innovations do widely exist at the early experimental stages, they often fail to be refined to the point of usefulness in large-scale adoption. And open-source projects have been very innovative at finding new business models to replace the traditional, paid-product model.

Other examples of Wolfram innovation include:

- Wolfram invented the computational notebook, which has been partially mirrored by Jupyter and others.

- Wolfram invented the concept of automated creation of interactive components in notebooks with its Manipulate function (also now emulated by others).

- Wolfram develops automatic algorithm selection for all task-oriented superfunctions (Predict, Classify, NDSolve, Integrate, NMinimize, etc.).
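The idea of automatic algorithm selection behind a task-oriented function can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a description of how Wolfram’s superfunctions are implemented: `find_root`, `_bisection`, `_secant` and the selection rule are all hypothetical, and a real system would weigh far more than a sign check.

```python
def _bisection(f, lo, hi, tol=1e-12):
    """Robust bracketing method; assumes lo < hi and a sign change on [lo, hi]."""
    flo = f(lo)
    while hi - lo > tol:
        mid = (lo + hi) / 2
        fmid = f(mid)
        if fmid == 0:
            return mid
        if (flo < 0) == (fmid < 0):
            # Same sign as the left end: the root lies in [mid, hi].
            lo, flo = mid, fmid
        else:
            hi = mid
    return (lo + hi) / 2


def _secant(f, x0, x1, tol=1e-12, max_iter=100):
    """Faster open method; needs no bracket but may fail to converge."""
    for _ in range(max_iter):
        f0, f1 = f(x0), f(x1)
        if f1 == f0:
            break  # flat secant line, cannot take another step
        x2 = x1 - f1 * (x1 - x0) / (f1 - f0)
        if abs(x2 - x1) < tol:
            return x2
        x0, x1 = x1, x2
    return x1


def find_root(f, a, b, method="Automatic"):
    """Task-oriented entry point: callers state the task, not the algorithm."""
    if method == "Automatic":
        # Hypothetical selection rule: use the robust method when a bracket exists.
        method = "Bisection" if f(a) * f(b) < 0 else "Secant"
    solvers = {"Bisection": _bisection, "Secant": _secant}
    return solvers[method](f, a, b)


bracketed = find_root(lambda x: x * x - 2, 0.0, 2.0)    # sign change: bisection
unbracketed = find_root(lambda x: x * x - 2, 2.0, 3.0)  # no sign change: secant
```

Code that calls `find_root(f, a, b)` does not change if a better method is later added to `solvers` and the selection rule is updated, which is the design property the task-oriented approach aims for.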

10. Paid software offers an open quid pro quo

Free software isn’t without cost. It may not cost you cash upfront, but there are other ways it either monetizes you or that it may cost you more later. The alternative business models that accompany open source and the deferred and hidden costs may be suitable for you, but it is important to understand them and their effects. If you don’t think about the costs or believe there is no cost, you will likely be caught out later.

While you may not ideally want to pay in cash, I believe that for computation software, it is the most transparent quid pro quo.

“Open source” is often simply a business model that broadly falls into four groups:

Freemium: The freemium model of free core technology with additional paid features (extra libraries and toolboxes, CPU time, deployment, etc.) often relies on your failure to predict your longer-term needs. Because of the investment of your time in the free component, you are “in too deep” when you need to start paying. The problem with this model is that it creates a motivation for the developer toward designs that appear useful but withhold important components, particularly features that matter in later development or in production, such as security features.

Commercial traps: The commercial trap sets out to make you believe that you are getting something for free when you are not. In a sense, the Freemium model sometimes does this by not being upfront about the parts that you will end up needing and having to pay for. But there are other, more direct traps, such as free software that uses patented technology. You get the software for free, but once you are using it they come after you for patent fees. Another common trap is free software that becomes non-free, such as recent moves with Java, or that starts including non-free components that gradually drive a wedge of non-free dependency until the supplier can demand what they want from you.

User exploitation: Various forms of business models center on extracting value from you and your interactions. The most common are serving you ads, harvesting data from you or giving you biased recommendations. The model creates a motivation to design workflows to maximize the hidden benefit, such as ways to get you to see more ads, to reveal more of your data or to sell influence over you. While not necessarily harmful, it is worth trying to understand how you are providing hidden value and whether you find that acceptable.

Free by side effect: Software is created by someone for their own needs, which they have no interest in commercializing or protecting. While this is genuinely free software, the principal motivation of the developer is their own needs, not yours. If your needs are not aligned, this may produce problems in support or development directions. Software developed by research grants has a similar problem. Grants drive developers to prioritize impressing funding bodies who provide grants more than impressing the end users of the software. With most research grants being for fixed periods, they also drive a focus on initial delivery rather than long-term support. In the long run, misaligned interests cost you in the time and effort it takes you to adapt the tool to your needs or to work around its developers’ decisions. Of course, if your software is funded by grants or by the work of publicly funded academics and employees, then you are also paying through your taxes—but I guess there is no avoiding that!

In contrast, the long-term commercial model that Wolfram chooses motivates maximizing the usefulness of the development to the end users, who are directly providing the funding, to ensure that they continue to choose to fund development through upgrades or maintenance. The model is very direct and upfront. We try to persuade you to buy the software by making what we think you want, and you pay to use it. The users who make more use of it generally are the ones who pay more. No one likes paying money, but it is clear what the deal is and it aligns our interest with yours.

Now, it is clearly true that many commercial companies producing paid software have behaved very badly and have been the very source of the “vendor lock-in” fear that makes open source appealing. Sometimes that stems from misalignment of management’s short-term interest to their company’s long-term interests, sometimes just because they think it is a good idea. All I can do is point to Wolfram history, and in 30 years we have kept prices and licensing models remarkably stable (though every year you get more for your money) and have always avoided undocumented, encrypted and non-exportable data and document formats and other nasty lock-in tricks. We have always tried to be indispensable rather than “locked in.”

In all cases, code is free only when the author doesn’t care, because they are making their money somewhere else. Whatever the commercial and strategic model is, it is important that the interests of those you rely on are aligned with yours.

Some benefits of our choice of model have included:

- An all-in-one technology stack that has everything you need for a given task.

- No hidden data gathering and sale or external advertising.

- Long-term development and support.

11. It takes steady income to sustain long-term R&D

Before investing work into a platform, it is important to know that one is backing the right technology not just for today but into the future. You want your platform to incrementally improve and to keep up with changes in operating systems, hardware and other technologies. This takes sustained and steady effort and that requires sustained and steady funding.

Many open-source projects with their casual contributors and sporadic grant funding cannot predict their capacity for future investment and so tend to focus on short-term projects. Such short bursts of activity are not sufficient to bring large, complex or innovative projects to release quality.

While early enthusiasm for an open-source project often provides sufficient initial effort, sustaining the increased maintenance demand of a growing code base becomes increasingly problematic. As projects grow in size, the effort required to join a project increases. It is important to be able to motivate developers through the low-productivity early months, which, frankly, are not much fun. Salaries are a good motivation. When producing good output is no longer personally rewarding, open-source projects that rely on volunteers tend to stall.

A successful commercial model can provide the sustained and steady funding needed to make sure that the right platform today is still the right platform tomorrow.

You can see the practical benefit of steady, customer-funded investment in Wolfram technology:

- Regular feature or maintenance upgrades for over 30 years.

- Cross-platform support maintained throughout our history.

- Multi-year development projects as well as short-term projects.

12. Bad design is expensive

Much has been written about how total cost of ownership of major commercial software is often lower than free open-source software, when you take into account productivity, support costs, training costs, etc. While I don’t have the space here to argue that out in full, I will point out that nowhere are those arguments more true than in unified computation. Poor design and poor integration in computation result in an explosion of complexity, which brings with it a heavy price for usability, productivity and sustainability.

Every time a computation chokes on input that is an unacceptable type or out of acceptable range or presented in the wrong conceptualization, that is a problem for you to solve; every time functionality is confusing to use because the design was a bit muddled and the documentation was poor, you spend more of your valuable life staring at the screen. Generally speaking, the users of technical software are more expensive people who are trying to produce more valuable outputs, so wasted time in computation comes at a particularly high cost.

It’s incredibly tough to keep the Wolfram Language easy to use and have functions “just work” as its capabilities continue to grow so rapidly. But Wolfram’s focus on global design (see it in action) together with high effort on the final polish of good documentation and good user interface support has made it easier and more productive than many much smaller systems.

Summary: Not being open source makes the Wolfram Language possible

As I said at the start, the open-source model can work very well in smaller, self-contained subsets of computation where small teams can focus on local design issues. Indeed, the Wolfram technology stack makes use of and contributes to a number of excellent open-source libraries for specialized tasks, such as MXNet (neural network training), GMP (high-precision numeric computation) and LAPACK (numeric linear algebra), as well as libraries for many of the 185 import/export formats automated behind the Wolfram Language commands Import and Export. Where it makes sense, we make self-contained projects open source, such as the Wolfram Demonstrations Project, the new Wolfram Function Repository and components such as the Client Library for Python.

But our vision is a grand one—unify all of computation into a single coherent language, and for that, the FOSS development model is not well suited.

The central question is, How do you organize such a huge project and how do you fund it so that you can sustain the effort required to design and implement it coherently? Licenses and prices are details that follow from that. By creating a company that can coordinate the teams tightly and by generating a steady income by selling tools that customers want and are happy to pay for, we have been able to make significant progress on this challenge, in a way that is designed to be ready for the next round of development. I don’t believe it would have been possible using an open-source approach and I don’t believe that the future we have planned will be either.

This does not rule out exposing more of our code for inspection. However, right now, large amounts of code are visible (though not conveniently or in a publicized way) but few people seem to care. It is hard to know if the resources needed to improve code access, for the few who would make use of it, are worth the cost to everyone else.

Perhaps I have missed some reasons, or you disagree with some of my rationale. Leave a comment below and I will try and respond.

“This does rule out exposing more of our code for inspection. However, right now, large amounts of code are visible (though not conveniently or in a publicized way) but few people seem to care. It is hard to know if the resources needed to improve code access, for the few who would make use of it, are worth the cost to everyone else.”

– It is certainly true that “spelunking” can be done in some cases, where the vital parts of a function are implemented in top-level code. However, a frequent discomfort I hear about regarding the use of Mathematica is that the documentation on the methods being used (and thus references to them) is usually very scant. I think those people are justified in not being comfortable. Just because they specify Method -> “DifferentialEvolution” in NMinimize, or Method -> “Adams” in NDSolve, to give explicit examples, people are supposed to assume the literature method is being applied to their problem, when it may well be that there were subtle changes that yield different behavior from what was expected. With source that is open to inspection, as well as (or maybe just, at the extreme) good pointers to the literature, people might have more confidence in using these functions.

I also hear similar complaints about the curated data functions or even Wolfram Alpha’s results. Currently, people might use the results of a Wolfram Alpha query when prototyping, but will switch to more “official” sources in actual applications. People might be more reassured to hear if Wolfram Alpha says it got the melting point of tin it returned from e.g. the latest CRC Handbook, or somesuch.

There are a few more points, but they should probably be in another comment. This is just what came to mind when I read that sentence.

That is certainly a common reaction. I think one has to distinguish between the theoretical benefit of seeing the source and the practical benefit. Lots of people are reassured that they could look if they wanted to, but don’t actually look when they can. The effort required to become familiar with a large code base to gain useful insight is quite high. When we take on new developers they need time to reach that state before they are safe to make significant contributions. That is not to say that the “marketing” value of that theoretical benefit should be ignored. In practice when people have serious questions about how code works, often connecting them with the right developer answers those questions faster.

On the Wolfram|Alpha data, there is a link at the bottom of the page to sources, though we have not solved the question of how to display the specific source of an answer which might depend on combining several sources to produce a plot or compute a derived value. For example, the “melting point of tin” query lists element data as having the following sources:

Audi, G., et al. “The NUBASE Evaluation of Nuclear and Decay Properties.” Nuclear Physics A 624 (1997): 1-124.

Arblaster, J. W. “Densities of Osmium and Iridium: Recalculations Based upon a Review of the Latest Crystallographic Data.” Platinum Metals Review 33, no. 1 (1989): 14-16.

Barbalace, K. L. “Periodic Table of Elements.” EnvironmentalChemistry.com.

Cardarelli, F. Materials Handbook: A Concise Desktop Reference. Springer, 2000.

Coursey, J. S., et al. “Atomic Weights and Isotopic Compositions with Relative Atomic Masses.” NIST—Physics Laboratory.

Gray, T., N. Mann, and M. Whitby. Periodictable.com.

Kelly, T. D. and G. R. Matos. “Historical Statistics for Mineral and Material Commodities in the United States, Data Series 140.” USGS Mineral Resources Program.

Lide, D. R. (Ed.). CRC Handbook of Chemistry and Physics. (87th ed.) CRC Press, 2006.

National Physical Laboratory. Kaye and Laby Tables of Physical and Chemical Constants.

Speight, J. Lange’s Handbook of Chemistry. McGraw-Hill, 2004.

United States Secretary of Commerce. “NIST Standard Reference Database Number 69.” NIST Chemistry WebBook.

Winter, M. and WebElements Ltd. WebElements.

The Wikimedia Foundation, Inc. Wikipedia.

Also “This does rule out” was meant to read “This does not rule out”. (Fixed now)

Here are some reasons why our startup switched from Wolfram to Python.

* the deployment options of the Wolfram Language are very limited and expensive, and are actually a lock-in. It is actually paid software with the addition of the Freemium and Commercial traps.

* you can learn so much from others from sites like Kaggle

* very hard to impossible to hire someone who knows Wolfram, therefore very high costs in training

If Wolfram were free, then the community would also grow and I could deploy my software wherever I want and have full control. So I really appreciate what Wolfram is trying to achieve and I am still a fan, but the direct and indirect effects of the paid model made it a bad choice for us.

Taking your points in turn:

* I don’t think your first point is a fair representation of deployment. The desktop route has always been – you pay for the development environment (e.g. Enterprise Mathematica) and you deploy for free using the Player. That is the opposite of freemium when it comes to deployment. For cloud deployment there are fees, though those are low for low demand in the public cloud and scale with use.

* Kaggle is a fine site. And while we have not tried to do what they do, we do put effort into community to help people get together and learn. The two main places are http://community.wolfram.com (hosted by us) and https://mathematica.stackexchange.com/ (independent but with many contributions by Wolfram people)

* This is an important challenge and we are putting more work into helping people learn (https://www.wolfram.com/wolfram-u/). But I think you also have to distinguish between language skills and knowledge of the whole platform. Knowing Python is not the same thing as knowing Python and the full set of libraries that one might need to assemble to solve a problem that Wolfram Language could address directly. If it is the Python coding skill and style then there is no reason to choose. You can access the whole of the Wolfram stack entirely from Python (https://pypi.org/project/wolframclient/). Coding skill problem solved! If you are talking about whole-stack knowledge, I think the recruiting gap is much smaller.

Thanks for your reply.

Deployment with the free player is very limited, since you cannot access files or databases; that’s why I thought of freemium. You get the very limited option with free CDF with the standard Desktop version and if you want something more useful you need to pay much more.

Yes the mathematica stackexchange community is very helpful. The http://community.wolfram.com lacked a search and was therefore useless for me in the past. Maybe it’s better now.

Overall, the underlying problem remains: if it’s paid, the community stays small, and therefore it’s harder to get answers and harder to recruit. Thanks for the info about wolframclient, this is really interesting and I will give it a go.

Player isn’t limited when the content is created in Enterprise Mathematica. However, I accept that that very answer is encroaching on the same ideas as Freemium, and is sufficiently complex that it doesn’t meet the highest standards of transparency that I would advocate. (And I must take some of the blame, as I was involved in those decisions at the time). There are some plans to streamline definitions to make them more transparent, the first step of which is to remove the distinction between CDF and NB in version 12.

Your central point about needing to grow the community is well made, where we have more to do. One imminent small step will be a simplified process and presentation of free cloud accounts, since not enough people are discovering the free options at https://www.open.wolframcloud.com/

Yes make the free option more prominent, and streamline the whole licensing. Maybe point students and hobby users there and invest in the long term growth instead of some quick $. Anyway thanks for this blog post and your answers and the effort for more transparency.

And yet there is one very important reason why it should be open source: scientific reproducibility.

All of the reasons you give seem to address the fact that the tech isn’t free, but none directly address the openness. If you look at the way Apple releases Darwin and WebKit code, you will see that open doesn’t necessarily mean free and non-commercial. Same thing goes for the success of Red Hat or Android, which are open source, but corporate-backed.

That said, if these are the best reasons Wolfram Research can officially come up with for not being open source, I think we can expect some parts of the language becoming open source soon.

Oh no wait, I forgot, most of the language is already open source: all the functions written in Wolfram Language have inspectable definitions (look it up on stackexchange). We just need Wolfram Research to release the ones that are written in C.

Yes, I had forgotten to mention the business of reproducibility, thank you for bringing it up. This is related to my statement about being assured that Method -> “Something” is really doing Something’s algorithm on whatever problem you fed it.

I see now that “this does rule out” in the original version of this entry was a typo. This I am hopeful about. In that case, I am sure a way can be found where yes, people can (and should) still pay for things like support, but that the software itself can still be made transparent to scrutiny.

Scientific reproducibility has little to do with Wolfram Language in my opinion. If you integrate some function it gives a response. You don’t need WL to verify, can be any language or book…

Moreover, at least in physics, I think the reproducibility is much more in the experiment rather than in the data-analysis. I have never heard of reproducibility issues with the data processing…

Yes. My comments were not really directed at the commercial corporate projects where the code is opened as a last step. Sometimes that is because the code is of no real value (the company is monetizing something else in the chain). But there is the question that I referenced briefly in the penultimate paragraph and in response to Joe Boy (the first commenter) about potential value in exposing code as an output of our commercial model. The notion of academic reproducibility is an extreme illustration of the “theoretical vs practical value” of seeing code as a means of understanding what is happening. One bad consequence of fully integrated computation is that often the way that WL computes something is much more complicated than a simple library version of a capability. Because we can call on lots of sophistication, we often do, making it all the more challenging to understand from the outside. To truly know how it works, there are potentially millions of lines of code, the code of all libraries and operating system operations, there is the code for the compilers used to turn source into machine code and then there is how the machine code executes on the CPU. A failure in correctness can occur at any stage, or just because you got unlucky and a cosmic ray flipped a memory bit as it executed (that happens).

It conflicts with our training in maths and science, but one has to accept that you will never know exactly how your computation is done, even in open source software, unless you dedicate your life to it (such as working for us).

Personally, I think the thing that matters more to scientific reproducibility is that the documentation should be clear about what is supposed to happen. I was always taught in maths, “show enough working that someone else could verify each step”. To me WL code isn’t proof, it is the description of how you arrived at your answer. If the documentation is clear enough, someone else should be able to reproduce your answer using non-WL code. (Though, again, that is a theoretical task more than a practical one, because if we are doing our job right, the WL code should be much simpler than the alternate implementation). Our documentation isn’t always good enough, but I think we don’t do badly and it is generally getting better.

As I said in my blog, that is not to say that we shouldn’t expose more code, but there are costs. My feeling is to spend that money on development rather than what I suspect is a marketing benefit more than a practical one. But I have added your vote to my mental tally for what the community wants!

I would disagree with this statement that it is not reasonably possible to show which decisions are being made behind the scenes to perform your calculation. I envision a new type of Trace function that returns a graph as ProofObject[“ProofGraph”] does. The “ProofGraphs” can be very complex and they are analogous to the decision process behind the scenes in selecting which branches of algorithms to test for selection and which was eventually selected.

Well, certainly you can show them, and all that information is available to you. But perhaps what I should have said is that it is not realistic for the human mind to be able to hold that information in a way that gives you reasonable insight.

A ProofGraph-like view on code flow might be possible. I suspect the main challenge is showing the right amount of detail.

I have never found a use for the one-argument form of Trace. Anytime that I have applied it to a non-trivial problem, I get so much information back that I need to write a program to analyze the answer.

I’m gonna throw this out there from a development oriented perspective: OSS is nice because, as things stand, when WRI screws up in kernel-level code or makes a suboptimal product there is no recourse for figuring out a) why it’s broken and b) how to fix it. OSS allows us to fully inspect and potentially even contribute a fix.

One other thing: Mathematica couldn’t even thrive as OSS because its developer tools are highly lacking. We in the external Mathematica dev community can try to fill this gap as much as is possible but with all of the inconsistencies in the design of the language, the feature bloat leading to poorly implemented functionality, and just the general lack of performance of a lot of key pieces of the software we often hit dead ends that we’re not able to work around.

Mathematica is a fun language, but I have to say writing big code in it causes a mental strain that just never appears with python.

Also the many, many, valid complaints from a prominent member of the Mathematica development community here: https://chat.stackexchange.com/transcript/message/49763393#49763393

It is really only the software world where there is a distrust of closed technology. I trust my life to cars and planes that are entirely closed to me, our very survival depends on electrical networks that we have no public inspection of, I allow myself to be irradiated by medical devices that I can’t examine…. The difference is that there is a small fraction of software users who feel that they have the knowledge to be able to make use of that information. I suppose a small fraction of air passengers know enough about plane design to understand a 747’s architecture, but none of them feel that they could fork Boeing if it ever went bust.

While there is a theoretical independence from Wolfram if it went bust or took a crazy development direction, having to take over responsibility for maintenance is almost as disastrous. I think the real practical value is as a threat to the owners to behave. I accept the value of that, as there certainly have been companies that have behaved badly and perhaps would not have if customers had had the walk-away power of source. All I can point to is 30 years of good behavior as a predictor of future behavior.

That was meant to be a response to Bill Mooney below…

What you say is partly untrue, partly really worrying.

The part that is false is your car analogy: once I buy the car, I own it and I can go service it wherever I want or even fix it myself. The same is patently false of closed software.

The part that is worrying is that you are comparing users of a programming language to passengers on a jet plane. That analogy might work for a videogame, maybe even for a computer algebra system, but users of a programming language are engineers and want to understand the tools they work with. And I think this attitude is the main reason why Mathematica is struggling to cross the chasm between computer algebra system and general purpose programming language.

Well you own the physical object of the car, but you don’t own the brand or designs or the circuit diagrams, or the simulations that explain what will happen when you modify it. You can only really fix it yourself to the extent that you can buy components that the manufacturer has chosen to make available or standardize/document the interface to or to the extent that you can reverse engineer it. The bigger problem with the analogy is that “fix” for a car means replace parts. Hardly anyone can “fix” their car by improving the design of the engine.

My impression is that this issue only really matters to relatively sophisticated developers who are capable of reading a language’s source code, but that is not a practical option for most people. What fraction of Python users have ever even opened its source code, let alone tried to fix something? I would be surprised if it were anywhere near 0.1%.

What gives people reassurance is that “someone else” can fix it for them. The question is whether a large number of others like themselves is more reassuring than a small number of others that they are paying.

The utility of an open-source language goes far beyond the simple ability to “fork” a language repository. You’re right that most people would not have the skill to fix problems in the language implementation, but that’s only a small part of why being open is useful. I program primarily in Scala/Spark for my day-job, and the ability to debug/trace into the language implementation (both Java and Scala source) is invaluable. One can inspect the values of variables at any level of the call stack and see what assumptions are built into the code even if one has no intention of actually modifying the language. I can fix issues with my inputs when I understand what is happening “under the hood.” I have learned a lot about how to write software (and how not to write software) by having the ability to understand every nut and bolt in my build. That’s why I get paid. I keep hearing a lot of chest-thumping about how WL is a ground-breaking general-purpose productivity language, and I admit (as a long-time paying user) it is really interesting. But, to be frank, it will rarely be used as such by professionals (in this day and age) as long as it is closed. Much as I like Mathematica for prototyping and data exploration, I would never trust it to build a high-throughput production-ready data-processing stack at my company running at scale. Respectfully, the days of developers embracing black-boxes (like they might do for a car) are over.
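As a rough illustration of that kind of inspection, here is a minimal Python sketch (Python rather than Scala purely for brevity; the helper name `frames_with_locals` is hypothetical). It triggers an error inside the open-source standard library and then walks the call stack, listing the local variables visible in each frame, including the frame inside the library itself.

```python
import statistics
import traceback


def frames_with_locals(exc):
    """Return (filename, function name, sorted local names) for each traceback frame."""
    result = []
    for frame, _lineno in traceback.walk_tb(exc.__traceback__):
        code = frame.f_code
        result.append((code.co_filename, code.co_name, sorted(frame.f_locals)))
    return result


try:
    # The standard library raises StatisticsError for empty input.
    statistics.mean([])
except statistics.StatisticsError as exc:
    stack = frames_with_locals(exc)

# The last entry is the frame inside the library's own `mean` function.
for filename, function, local_names in stack:
    print(function, filename, local_names)
```

Because the library source is available, the innermost frame can be read alongside the code that produced it, which is the "under the hood" view described above.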

I am not sure the “30 years of good behaviour” actually applies. I use Mathematica a fair bit, but I steer my students to Python, to reduce the risk of lock-in — I love Mathematica, but find Wolfram’s pricing policies to be designed to encourage lock-in.

Wolfram is fantastic in the sense that code I wrote 20 years ago likely still works today, and it provides a fantastic mix of computational paradigms in the same box. It is truly impressive.

However, I am not actually convinced it is a company I would trust in the long term.

I have seen a number of examples in the stack exchange where its users have shown that a built-in function can be handily outdone by user-made functions both in speed and accuracy, thus suggesting that insufficient development and/or QA time were spent on those functions. A user would reasonably think that something she or he is paying(!!!) for would have things that are, if not the best, very nearly the best, and yet here we are. Having code open for inspection would have helped avoid such awkwardness.

I think embarrassing us with a better implementation and then reporting our failure to us as a bug is probably at least as effective a remedy as reading the code to understand what we did wrong (though that might add to our embarrassment!).

(Sometimes we prioritize a method that is better overall but not the best in specific cases and lacks a good test for algorithm switching, but I expect that is not the explanation for most of the examples you are referring to).

For me, open source is more about intellectual property rights, than about architecture and control. From the outside looking in, it appears that you have made some decisions based on some bad assumptions of what open source software has to be. I could argue your points one by one, but I think it’s more important to address the key factor here: The market is inherently distrustful of closed technology systems.

I do believe the scope of what Wolfram is trying to do is massive and important, but given the barriers to using the technology, it will be hard to extend its market. Worse is still better.

Personally, I do not really have a problem that Mathematica is not Open Source. Your points are understandable and it is completely valid for a company to go down that road.

However, there is one point, though, which is a mystery for me that you don’t follow a more open approach: a public issue tracker. One of the biggest problems of the Mathematica ecosystem is, IMHO, the huge amount of open bugs and glitches. But what is worse, there is no central accessible place which keeps a list of all these problems and their current state. If there were, we would at least know if our problem is known and someone is working on it. Currently the information is spread around everywhere: forum, stackexchange, or, worst of all, individual contact with the support team (because then all the other users with the same problem do not have the information).

This is not even a new idea. Many other companies apply this strategy successfully (e.g. Microsoft Visual Studio, JetBrains, …). I really think that this could also be a huge benefit for Mathematica and its users :-)

Jan – I haven’t been involved in any of the decisions related to public bug tracking, so there may be issues I don’t know about but my main concern would be if it were to actually slow down or constrain the conversation. If contents had to be publicly accessible, would it take longer to avoid the insider jargon that our bugs database is full of, and would a developer feel unable to express the severity or inept cause of a bug knowing that they might be quoted out of context? It does feel like the world is more mature in its attitude to bugs than when I started in the 90s.

Then if you admitted that there were bugs some people would instantly demand refunds or claim that it was a trick to force you to upgrade. Even today in a Reddit discussion about this blog, someone was quoting a bug that was closed 5 years ago to discredit my comments.

I suspect that the benefits would outweigh the costs, but there are costs. But as I said, it isn’t my field.

Hi there. I think this is a very well thought out argument. My issue with it is that it is contradicted by reality. I mean, there are numerous examples of open source products that do just what you try to disprove possible… projects with a sustained, long-lived, constant development, world-wide adoption, quality, complex, and so on.

So while an interesting intellectual exercise, it fails to adhere to reality.

Nevertheless it got me thinking why is it so… that would be an article I would also read.

Jon – Very interesting read with some great points. Also, very brave of you to touch on this subject!

Thank you for the great post Jon. I agree with Wolfram’s direction. Keep up the great work.

While some of the above commentary might be OK as a justification for mostly industry solutions, when it comes to academic or non-profit research the Wolfram Language is beginning to lag behind. The US Federal funding agencies are highly unlikely to fund research for which the majority of potential developers and users cannot obtain free licenses. In fact peer reviewers typically negatively evaluate such proposals, so developing in the Wolfram Language, however wonderful to code in, leads to an inherent funding disadvantage. Hence the success of Python and R for data science and federally funded research. If Wolfram intends to keep up with these developments, then rethinking the license model is essential: i.e. free licenses should be provided for academic and non-profit organizations. Otherwise, we cannot in these environments continue to develop software solutions in the Wolfram Language without funding, given that Wolfram itself does not have any funding mechanisms to encourage connections to non-profit/academic researchers. Keep in mind the payoff: students or researchers moving into the for-profit sectors, once they have used Mathematica, are more likely to continue using it. However right now, most data science and quantitative mathematical science teaching is shifting to using R and Python in the classroom – and this will have a severe detrimental effect on the Wolfram user base in the coming years.

In academia our focus is on “free at the point of use” and we are making good progress towards this.

There are a very large number of universities (at least in developed countries) where Mathematica is licensed on an unlimited basis. Unfortunately not all universities pass this model on to the staff and students by internally charging cost recovery fees per install, but we are working to encourage unrestricted access.

We are also being much more open to whole-system arrangements. Perhaps the biggest is Egypt where every single student, academic and teacher in the country has free (to them) access to Mathematica (probably about 40 million people). I would like to see more such arrangements.

With the free Wolfram Cloud access options (soon to be simplified) limited access is free to essentially everyone.

The case needs to be made in the funding applications, and this seems like something we should help articulate.

Please do NOT o/s the Mathematica system. EVER. Instead, WRI should be more amenable to user feedback/suggestions, but in the end, still retain all decision rights. I’ve seen a quarter century of *horrible* battles between total idiots (individuals and entire groups) about how to “improve” software, and which strategic direction to go, which in the end made bad software worse. In a way, it’s understandable because different people have different expectations, ideas, and visions. No criticism here. I know what I write here won’t be a popular opinion, because “diversity” and “inclusiveness” are currently the mantras du jour, quality and consistency are not. Then there are the communication and decision-making / committer issues. Endless debates and fights, because people cannot agree, get stubborn and petulant, hasty commits, and the M system is not something that can easily change directions or “fix” things, because there are some things called “kernel integrity” and backwards-compatibility. “Decision by committee” is not accidentally used to criticize useless blahblah groups. As long as my suggestion is duly noted and not ignored (but can eventually be discarded as a bad suggestion), I’ll be satisfied. I don’t need to “participate”, and I don’t have the time to do it. But I need a powerful system, and PLEASE don’t water it down by too much participatory inclusiveness / diversity. People can make their very diverse suggestions known through the feedback/support mechanism. As long as people actually *deal* with it (and not just drop it), then there is diversity of thought. And we do *not* want participatory inclusiveness in the development of the M system. We need this to WORK WELL!

I see enough developer debates to know that user feedback gets through and is considered.

The impression I get, however, is that we don’t get that much of it and have to search for some of it in external forums. But my guess is that people feel that sending suggestions will be ignored, and probably the first line response from support of “your suggestion has been forwarded to our development team” is assumed to be a brush-off.

This contrasts with what I see at the annual Wolfram Technology Conference where it is hard to take the volume of feedback that one gets in face-to-face conversations.

I don’t immediately know how to be more inviting when not face-to-face.

Sorry, I didn’t mean to express that it *does not happen*. I merely said that it *needs* to happen. If it does, all good! And that’s all I ask for. The only *other* thing I ask for is that you do *not* o/s the M system! (ouch you’re working late, 21:15 in London now?)

Just to avoid doubt, this blog was not a precursor to some big announcement! Just an opinion piece.

Consideration of user feedback is real. I have seen my comments/suggestions to WRI Support and at WTC actually become implemented on more than one occasion.

WRI can have all decision rights about Mathematica even if Mathematica is open-source. It doesn’t have to listen to the community or take commits to the code.

I have used Mathematica for almost three decades at academia and during 22 years of system and product engineering and it is still my favourite software tool now in the new shape of Wolfram One. My main point in this discussion is that if we value time as money with a reasonable conversion factor, then the Wolfram Language is as close as you can come to free software, because if you can solve it within Wolfram it will definitely take less time than fiddling around with Python, MATLAB or ROOT packages/libraries (F# is fun too but lacking the built-in knowledge and algorithms).

Then it is also very obvious that there is still a long way to go from solving an engineering challenge with Wolfram capabilities to generate “production code” for mass-produced embedded products. Maybe new compiler technology in the next release is a silver lining? I keep my fingers crossed.

Last (but maybe least) when could we have an interactive way to zoom into a 2D plot with the mouse only?

…three decades, first at academia and then during 22 years…

The initial version of the new compiler is landing in version 12; keep an eye on the blog.

There is a mouse zoomable plot at https://resources.wolframcloud.com/FunctionRepository/resources/DragZoomPlot

You won’t be able to use the ResourceFunction until version 12, but you can download the notebook now.

Wow, you are really reinforcing my point, fast-track to solutions! Thanks a lot for info and I am looking forward to version 12 release.

Well, from my own experience, once I start prototyping my ideas in Mathematica, there is limited freedom for me to choose my tech stack. It seems that if you have done one thing in MMA, then you should probably do all the things in MMA. It is like a honeypot, where you enjoy the parts that benefit you, but you need to bear all the other parts which MMA is not good at. Thus, I am quite opposed to relying on a huge system like Mathematica when choosing my toolchain for industrial usage. There is no silver bullet that unifies the design while keeping everything smooth and fast. As you said, MMA is a multidisciplinary software, but not all the users are interested in all the subjects that MMA deals with. They might just need a few new features and old bugs fixed in a specific area. It is not reasonable for them to deploy the gigabytes of the environment and wait for a major update every two or three years. A more efficient and economical way for them is to decouple things into modules where the bottleneck may be quickly located and replaced by a better substitute. In the open-source world, users and developers enjoy the “combos” of different tech frameworks. One of the biggest reasons is they are capable of upgrading any “weapon part” in their hand for a specific purpose quickly, instead of sticking to a corpulent system and waiting for its evolution.

Also, unified representation seems a joke in Mathematica to me. Wolfram Language is a dynamic pattern-based lisp-like language; it is flexible and expressive. It has dozens of programming paradigms, which enables developers to choose whatever they like to represent the data. We have seen the encapsulation in object-oriented form, like `Graph`, `CellObject` and `NotebookObject` that use `SetProperty` or `PropertyValue` as the interfaces. Meanwhile, we may encounter `SocketObject`, `InterpolationFunction` and `LinearModel`, whose interfaces are bound to their `DownValues`. There is no official code style guideline in WRI; even the packages that are maintained by the same team have different data representations, because too many developers have touched them during the thirty years and they have different preferences in implementing the packages. Take the packages which are open-sourced and easy to find in the installation folder of MMA for example: they apparently have completely different design patterns and are not as unified as you wrote in the blog. This is how the Wolfram language impresses people. It allows difference and freedom for developers to interpret the same idea. So personally, I am not a big fan of the centralized decision and unified design.

I am not requiring WRI to open-source the entire MMA or other products. I am just suggesting that they should be more open and more developer-friendly. We do not care as much about your commercial operations as you do, but we do concentrate on technical details. I recall a lecture about parallel computing given in Wolfram U. The lecturer said that usually `Table` is faster than `Map`, but sometimes it is not. And sometimes you may want to try `PackedArray` for better performance. He presented this in such an uncertain way, just like the response you get from Google or other third-party communities such as StackExchange. It would be fascinating for WRI to publish a technical manual, besides the documentation that mainly deals with the basic usage of the functions. As an engineer, I care about the metalanguage details such as the memory model, evaluation strategies, operator precedence, and interpreter implementation. There are commercial secrets behind the algorithms, but the core language parts are so basic that it is not necessary to hide them from developers.

Also, as I stated earlier, it is good to break things down into modules. The fact that the backend (kernel) and frontend (notebook) are entangled so deeply is really an obstacle for developers who want to implement efficient packages. For example, the `Rasterize` function is implemented in the frontend, and it takes several seconds for the kernel to send the data to the frontend and get the result back. Also, calling the `Export` function on images will start a frontend in the background. This kind of entanglement happens in many places in MMA. A pure pattern test (like head checking for _SocketObject) will automatically import “zeromq” packages, which takes up a few seconds, even if you do not need them. Modularization not only benefits developers, but also the business. You may divide the packages into different bundles, and sell and upgrade them separately.

I want to separate modularity as an implementation issue from modularity in the user experience. Good modularity in implementation is sensible, and done poorly is bad. Some of the problems you describe are caused by modularity (not having Rasterize in the kernel, loading libraries when less common functionality is needed) but it hasn’t been done as well as it could be (loading ZMQ if it wasn’t needed, and having modules like the FE that are too big). (There is a long term project to re-engineer the FE but it isn’t in the near future).

But the idea that you should break up the user experience into independent components is, I think more problematic. It is already problematic enough to have to worry about whether your code uses version 11.3 features that won’t work if you send it to an 11.0 user. But making it so that the required versions are a list of individually versioned components is unmanageable. Even if you think you are only touching three modules, because of the way that we integrate all of the functionality to be able to make use of the rest, the result would probably be a dependency tree that ended up upgrading everything anyway.
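The dependency-tree point can be sketched with a toy example. The component names and dependency edges below are hypothetical, invented purely for illustration; they are not the actual structure of the Wolfram Language. The sketch only shows how asking to upgrade three components can pull in everything once transitive dependencies are followed.

```python
from collections import deque

# Hypothetical component graph: each key depends on the listed components.
DEPENDS_ON = {
    "Plotting": ["Graphics", "Numerics"],
    "Graphics": ["Kernel", "Typesetting"],
    "Numerics": ["Kernel", "LinearAlgebra"],
    "LinearAlgebra": ["Kernel"],
    "Typesetting": ["Kernel"],
    "Import": ["Kernel", "Graphics"],
    "Kernel": [],
}


def upgrade_closure(requested):
    """Return every component that must be upgraded along with `requested`."""
    seen = set(requested)
    queue = deque(requested)
    while queue:
        # Breadth-first walk over the dependencies of each pending component.
        for dep in DEPENDS_ON[queue.popleft()]:
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return seen


touched = upgrade_closure(["Plotting", "Import", "LinearAlgebra"])
print(f"{len(touched)} of {len(DEPENDS_ON)} components need upgrading")
print(sorted(touched))
```

In this made-up graph the three requested components drag in all seven, which is the situation described above: per-component versioning would still end up upgrading the whole system.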

The idea that you don’t need all of the Wolfram Language is true, but breaking it up is problematic. Years ago we listened to users who said “I only want to use 10% of Mathematica, can’t I get a cut down version that is cheaper?” I led the development of CalculationCenter that addressed that demand. When we took it to market, lots of people said “If it just had feature X, I would buy it” but unfortunately X was different for every customer. Modular purchase would destroy sharing. At least “you need Mathematica or Player to run this” is easy to explain. Having to list a set of toolboxes is not.

On the gigabytes of download: there is a discussion going on about re-creating a “no documentation” build where docs would download on demand or be web based only. That would cut out more than 50% of the installation size. Not for version 12 though.

I’d say there is little point in open-sourcing the kernel to the public, as few people would be able to read or review its code without devoting all their time to it. But OTOH it would be a great idea to invite certain people outside WRI to access the code (like Microsoft’s MVPSLP Program).

And as an intermediate developer, without the need for kernel source access, what I really wish for is a friendlier package development environment: a stable, thoroughly documented package building and distribution system, an official Wolfram Language specification, and a promise about which functions are subject to long-term stability. And maybe a collaboration system to let WRI participate in third-party package development, so as to make sure there is no conflict between any important third-party packages and the company’s grand design. That way, for WRI there won’t be a risk of compromising its own design. As for the developers, without having to worry about their work suddenly becoming incompatible with the latest WL, they can really be encouraged and enjoy participating in the establishment of an ecosystem.

That said, I think WRI can and should totally keep the sole power of deciding the future path of WL. In the meantime it will definitely be good to have a bridge / portal between WRI and the developers / users that is as open as possible. We would not just sit and wait for whatever ships from WRI; we would also be able to contribute during the decision-making stage, from *our* point of view.

Lastly, hopefully regular users’ wishes would get passed on to WRI through the words of developers.

Talk more with developers. That’s my two cents.

For larger projects we do have a partnerships team who are supposed to help with some of those issues. The fact that I can’t immediately find the email address suggests we don’t publicize it enough. But mail into info@wolfram.com and ask for the message to be forwarded to them and it should get through.

I believe that there is a plan to clean up and document the Paclet mechanism that we have long used for package delivery and updates, and create ways for you to deliver through that. Not sure of the schedule.

We will imminently have a simplified mechanism that is meant for single-function delivery. It will initially only support free functions with source code. You can see it at https://resources.wolframcloud.com/FunctionRepository

Right now it only contains a few hundred functions submitted by Wolfram people, but with version 12, it will be open to user submissions.

I learned again and again that using software where I can’t fix a problem that interferes with my work is simply not worth my time. Sorry but I can’t trust it, and without a possibility of fixing or working around bugs I’d rather look for alternatives.

Given this hostility to open source, I am today orphaning the support for Mathematica fonts in Debian-based distributions (Ubuntu, Devuan, Mint, …) that I had been maintaining. If someone is willing to pick it up, now is the time. Otherwise, I’ll file for removal shortly.

I’m sorry you feel that this blog was hostile; that wasn’t my intent. As I said in the article, I think FOSS development is very good at some things, but I wanted to explain why I don’t think it would work for what we are trying to do.

If there is information you are missing about the fonts, please just ask for it.

I’m a theoretical physicist, and I use computer algebra very intensively for calculations of multi-loop Feynman diagrams. I use Mathematica (and find it very powerful), and also Reduce (I have used it since 1978; it is much more efficient than Mathematica in many cases), as well as some other free systems. What I see when I compare Mathematica with various free software programs:

1. Bugs in Mathematica are not fixed for many years after they have been reported. Users who report bugs get no feedback from the developers. Any free software project has a public bugzilla, where bugs can be reported and discussed. Mathematica is closed, and users are helpless. Just try

DiscreteRatio[Sin[x],x]

This elementary bug has been reported long ago, and still is not fixed.

2. New bugs are introduced all the time. A moderately complicated calculation (about 10 minutes, I think) which had run successfully in Mathematica 11.0 causes the Mathematica kernel to die silently in 11.3. As a result, my colleague (who has paid for the upgrade to 11.3!) had to downgrade to 11.0.

3. In many cases, bug reports from users have a form like: this integral was calculated correctly in version x, but produces a wrong result in version x+1. In any free software project I know, developers would immediately do git bisect and find the offending commit. If, after this commit, some previously correct integral has become wrong, this commit is, at least, suspicious. And developers would try to refine it. In Mathematica, nobody fixes these bugs, and the integral continues to be wrong in versions x+2, x+3, … ad infinitum, for many years.
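For readers unfamiliar with git bisect, the idea behind it is a binary search over the commit history. The following is a minimal Python sketch of that search, not git's actual implementation. The commit names and the `integral_is_correct` check are hypothetical stand-ins for "build this commit and rerun the integral," and the sketch assumes that once the regression appears, every later commit is also bad.

```python
def first_bad(versions, is_good):
    """Binary search for the first version where is_good() fails.

    Assumes versions[0] is good, versions[-1] is bad, and that the
    result stays bad after the first bad version.
    """
    lo, hi = 0, len(versions) - 1  # lo is known good, hi is known bad
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if is_good(versions[mid]):
            lo = mid
        else:
            hi = mid
    return versions[hi]


# Hypothetical history of 16 commits; the regression enters at "c11".
commits = [f"c{i:02d}" for i in range(16)]
checked = []


def integral_is_correct(commit):
    # Stand-in for building the commit and re-running the failing integral.
    checked.append(commit)
    return commit < "c11"


culprit = first_bad(commits, integral_is_correct)
print(culprit, "found after", len(checked), "checks")
```

The number of checks grows with the logarithm of the number of commits, which is why locating the offending commit is cheap once a reproducible failing case exists.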

Sorry, I meant DiscreteRatio[Sin[Pi*x],x] of course

I am told that this bug is fixed in V12

I’m not qualified to comment on the specific bug. But I will just make one general point when we are in the space of things like Integrate. While many regressions are just mistakes that should be corrected quickly when traced, sometimes they are victims of a net improvement. In order to fix a more important problem (a more common integral, or a large class of cases), the sacrifice may be breaking a less important or smaller class. Conversely, when the fixes for Integrate bugs cause a net deterioration elsewhere, we don’t include them. I think this is perhaps intrinsic to problems that are implemented at or close to the limit of human knowledge on a topic.

I have similar concerns. Sometimes Mathematica works like a black box, without telling the user how certain things are implemented. To use Mathematica programming effectively and correctly for research, I really need to know a little more about how some functions are implemented. Oftentimes, when the topic is esoteric enough, it is really hard to find qualified people to discuss it with. For example, https://community.wolfram.com/groups/-/m/t/1616638. It seems that sometimes there needs to be a one-on-one online or phone communication channel between the relevant Wolfram developer and the user.

I understand that there is a plan to have more real-time support. But that only half answers your request as our support people are only expected to answer “regular” questions, and the really in-depth problems have to be escalated to the developers, who will not be in a public-facing role, so that they can spend most of their time “developing”.

While community.wolfram.com and mathematica.stackexchange.com are both great and quite a few of our developers contribute, we don’t monitor them as a formal process. So you should send questions or bug reports to support@wolfram.com, where they are tracked. It doesn’t guarantee you the answer you want, but you shouldn’t get ignored.

Hello Jon,

This blog post confirms several ideas and attitudes within Wolfram Research that I always suspected, and which are very concerning to me. I have invested very heavily into Mathematica/WL, including putting countless hours into the development and maintenance of several packages. Thus I feel that I need to respond.

I am less interested in whether Mathematica should or shouldn’t be (F)OSS. What I am concerned about are several opinions that you expressed above. I do want to note though that it seems to me that you conflate making the source open with how a project is governed. Yes, many or most FOSS projects are driven by community-contributions, and I agree with many of the points you make about what’s wrong with that. I am also in the (probably) minority that would agree with your point 9, and I acknowledge the many innovations that came out of WRI. I, as well, have pointed out many times in the past how community-driven projects do not seem to be capable of producing true innovation, and will mostly just copy an often inferior (but popular) concept. E.g. most competitor systems just copy MATLAB’s IMO inferior linear algebra approach. I also remember many conversations I had about the notebook workflow before Jupyter/ipython became popular. People just didn’t get it, and the typical response I got was that this is not necessary, redundant or even inferior, or “we already have report generation” (which is not the same thing). Look at how everyone is using Jupyter now, often without even knowing where the notebook idea originally came from!

With that out of the way, let me take some points you made (quotes are marked with three ” signs). Some are very concerning, as WRI’s approach has exactly the opposite practical effect to the one you claim, while open-source projects manage much better. This should not happen!

While reading the below, please keep in mind that it is meant as constructive criticism that comes from an avid fan of Mathematica who hopes to be able to continue using it for a long time to come. I would not take the time to comment if I did not care.

“””

Your choice of computational tool is a serious investment. You will spend a lot of time learning the tool, and much of your future work will be built on top of it, as well as having to pay any license fees. In practice, it is likely to be a long-term decision, so it is important that you have confidence in the technology’s future.

“””

This is a very good point, and it is, unfortunately, precisely the reason why I often feel like I should jump ship as my over-reliance on Mathematica makes me vulnerable. I am on the brink of losing confidence in the technology’s future.

“””

Because open-source projects are directed by their contributors, there is a risk of hijacking by interest groups whose view of the future is not aligned with yours.

“””

Well, this is exactly what happened to me when I bet on Mathematica! With an open-source project I at least have some influence, or can contribute to mould it to my needs (this is not theoretical, I have done this!).

Mathematica promised to provide usable graph theory or network analysis functionality. Then the development of Graph was simply abandoned. This is not admitted publicly, but it is plain as day to someone like me who tries to use this functionality regularly. No new functionality of substance has been added since v10.0 (PlotThemes for graphs don’t count), and the countless very serious bugs are not getting fixed, or are fixed only very slowly. Responses relayed by support are either not helpful, or are plain refusals. To be fair, 12.0 (which I had the opportunity to beta test) has fixed more practically relevant graph-related bugs than any previous version since 10.0, but the general functionality area is still in a very sorry state, with many more unaddressed issues than in other parts of WL.

How do I deal with this? I started my own network analysis WL package (IGraph/M), originally as an interface to the igraph library, then with many more functions added independently.

Can you convince me that I am not being extremely foolish for still making my network analysis work dependent on WL after Wolfram has left me high and dry? At this point, it is hard to bring rational arguments, even though I very much WANT to be convinced.

Compare that with open-source: igraph’s original authors practically no longer contribute. But I _can_ contribute, I can fix things, I can implement new algorithms, and they are all accepted. I try to eventually contribute everything I do back to the open-source igraph (which also has a Python and R interface) and NOT just my IGraph/M WL package because I am no longer confident that Wolfram will support me in the future. I no longer have much confidence in the technology’s future.

“””

The theoretical safety net of access to source code can compound the problem by producing multiple forks of projects, so that it becomes harder to share your work as communities are divided between competing versions.

“””

It’s not a theoretical safety net, it’s a real one that I am relying on, as I explained above. And if igraph’s maintainers disappeared from the planet tomorrow, I could fork the project and would lose no prior work.

What if Wolfram goes bankrupt? I can’t say the same.

Also, community fragmentation from forks is not a common thing at all (though I am well aware that at least one FOSS project that WRI relies on was affected by this).

“””

Minimal dependencies (no collections of competing libraries from different sources with independent and shifting compatibility).

“””

This way of thinking is one of the major issues I have with Wolfram. Packages are not bad, they are good: both structuring Mathematica into packages and encouraging a healthy third-party package ecosystem.

For some reason, Wolfram is almost hostile towards package developers. Functionality that would be critical for package development is not added, or is hidden in undocumented contexts like Internal. You refuse to use namespaces; everything must go in System, which is a surefire way to regularly break compatibility with existing packages.

Not only that, but you are also shooting yourself in the foot: You talk a lot about design, and the importance of good design, while making some extremely questionable choices that go against what everyone else accepts (such as that namespaces, or “contexts,” are helpful).

Did you know that the design of Graph is just broken, and can’t be fixed? The API is such that it will never be possible to work conveniently with multigraphs, and it is plainly impossible to assign edge properties to them. This is _critical_ for practical network analysis (what network scientists do) and also important to certain branches of graph theory (what mathematicians do).

I pointed this out many times through many channels (including support) and got exactly zero feedback from the developers. No wonder, what could they say?

Maple, a system that embraces namespaces/packages, has already replaced its original graph package with a new, better one, while also providing access to the old package. They could easily do it in the future because their system is structured into packages.