The Semantic Representation of Pure Mathematics

Introduction

Building on thirty years of research, development and use throughout the world, Mathematica and the Wolfram Language have been designed for the long term and continue to be extremely successful at computational mathematics. The nearly 6,000 symbols built into the Wolfram Language as of 2016 allow a huge variety of computational objects to be represented and manipulated, from special functions to graphics to geometric regions. In addition, the Wolfram Knowledgebase and its associated entity framework allow hundreds of concrete “things” (e.g. people, cities, foods and planets) to be expressed, manipulated and computed with.

Despite the rapidly increasing number of domains known to the Wolfram Language, many knowledge domains still await computational representation. In his blog post “Computational Knowledge and the Future of Pure Mathematics,” Stephen Wolfram presented a grand vision for the representation of abstract mathematics, known variously as the Computable Archive of Mathematics or the Mathematics Heritage Project (MHP). The eventual goal of this project is no less than to render all of the approximately 100 million pages of peer-reviewed research mathematics published over the last several centuries into a computer-readable form.

In today’s blog, we give a glimpse into the future of that vision based on two projects involving the semantic representation of abstract mathematics. By way of further background and motivation for this work, we first briefly discuss an international workshop dedicated to the semantic representation of mathematical knowledge, which took place earlier this year. Next, we present our work on representing the abstract mathematical concepts of function spaces and topological spaces. Finally, we showcase some experimental work on representing the concepts and theorems of general topology in the Wolfram Language.

The Semantic Representation of Mathematical Knowledge Workshop

In February 2016, the Wolfram Foundation, together with the Fields Institute and the IMU/CEIC working group for the creation of a Global Digital Mathematics Library, organized a Semantic Representation of Mathematical Knowledge Workshop designed to pool the knowledge and experience of a small and select group of experts in order to produce agreement on a forward path toward the semantic encoding of all mathematics. This workshop was sponsored by the Alfred P. Sloan Foundation and held at the Fields Institute in Toronto. Approximately forty participants met for three days of talks and discussions. They came from a range of fields, including:

- computer algebra

- interactive and automatic proof systems

- mathematical knowledge representation

- foundations of mathematics

- practicing pure mathematics

Among the many accomplished and knowledgeable participants (a complete list of whom, together with the complete schedule of events, may be viewed on the workshop website), Georges Gonthier and Tom Hales shared their experience on the world’s largest extant formal proofs (the Feit–Thompson odd order theorem and the Kepler conjecture, respectively); Harvey Friedman, Dana Scott and Yuri Matiyasevich brought expertise on mathematical foundations, incompleteness and undecidability; Jeremy Avigad and John Harrison shared their knowledge and experience in designing and implementing two of the world’s most powerful theorem provers; Bruno Buchberger and Wieb Bosma contributed extensive knowledge on computational mathematics; Fields Medal winners Stanislav Smirnov and Manjul Bhargava expounded on the needs of practicing mathematicians; and Ingrid Daubechies and Stephen Wolfram shared their thoughts and knowledge on many technical and organizational challenges of the problem as a whole.

As one might imagine, the list of topics discussed at the workshop was quite extensive. In particular, it included type theory, the calculus of constructions, homotopy type theory, mathematical vernacular, partial functions and proof representations, together with many more. The following word cloud, compiled from the text of hundreds of publications by the workshop participants, gives a glimpse of the main topics:

Recordings of workshop presentations can be viewed on the workshop video archive, and a white paper discussing the workshop’s outcomes is also available. In addition, because of the often under-emphasized yet vital importance of the subject for the future development (and practice) of mathematics in the coming decades, 18 participants were interviewed on the technological and scientific needs for achieving such a project, culminating in a 90-minute video (excerpts also available in a 9-minute condensed version) that highlights the visions and thoughts of some of the world’s most important practitioners. We thank filmmaker Amy Young for volunteering her time and talents in the compilation and production of this unique glimpse into the thoughts of renowned mathematicians and computer scientists from around the world, which we sincerely hope other viewers will find as inspiring and enlightening as we do.

Computational Encoding of Function Spaces

The eCF project encoded continued fraction terminology, theorems, literature and identities in computational form, demonstrating that Wolfram|Alpha and the Wolfram Language provide a powerful framework for representing, exposing and manipulating mathematical knowledge.

While the theory of continued fractions contains both high-level and abstract mathematics, it represents only a tiny first step toward Stephen Wolfram’s grand vision for computational access to all of mathematics and the dynamic use of mathematical knowledge. Our next step down this challenging path therefore sought to encode within the Wolfram Language and Wolfram|Alpha entity-property framework a domain of more abstract and inhomogeneous mathematical objects having nontrivial properties and relations. The domain chosen for this next step was the important and fairly abstract branch of mathematics known as functional analysis.

That step posed a number of new challenges, among them the need for graduate-level mathematical knowledge in the domain of interest, formulation of entity names that “naturally” contain parameters and encode additional information (say, measure spaces) and the introduction of stub extensions to the Wolfram Language.

Work was carried out from December 2014 to July 2016 and consisted of knowledge curation in three interconnected knowledge domains: "FunctionSpace", "TopologicalSpaceType" and "FunctionalAnalysisSource", together with the development of framework extensions to support them. This functionality was recently made available through the Wolfram Language entity framework and consists of the following content:

- 126 function spaces (many parametrized); 45 properties

- 39 topological space types; 14 properties

- 147 functional analysis sources; 49 properties

Full availability on the Wolfram|Alpha website is expected by early January 2017.

Function Spaces

Two underlying concepts in functional analysis are those of the function space and the topological space. A function space is a set of functions of a given kind from one set to another. Common examples of function spaces include Lp spaces (Lebesgue spaces; defined using a natural generalization of the p-norm for finite-dimensional vector spaces) and Ck spaces (consisting of functions whose derivatives exist and are continuous up to kth order).
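To make "a natural generalization of the p-norm" concrete, here is a minimal plain-Python sketch (not Wolfram Language, and not how the curated entities are implemented). It computes the finite-dimensional p-norm, then a crude midpoint-rule approximation of the Lp norm of a function on an interval, where the sum over coordinates becomes an integral. The function names `p_norm` and `lp_norm_midpoint` are our own.

```python
def p_norm(x, p):
    """p-norm of a finite-dimensional vector x.

    (sum |x_i|^p)^(1/p) for 1 <= p < inf, and max |x_i| for p = inf.
    """
    if p == float("inf"):
        return max(abs(t) for t in x)
    if p < 1:
        raise ValueError("p must be at least 1 for this to be a norm")
    return sum(abs(t) ** p for t in x) ** (1.0 / p)


def lp_norm_midpoint(f, a, b, p, n=1000):
    """Approximate (integral_a^b |f(t)|^p dt)^(1/p) with an n-point midpoint rule."""
    h = (b - a) / n
    total = sum(abs(f(a + (k + 0.5) * h)) ** p for k in range(n)) * h
    return total ** (1.0 / p)


print(p_norm([3, -4], 1), p_norm([3, -4], 2), p_norm([3, -4], float("inf")))
print(lp_norm_midpoint(lambda t: t, 0.0, 1.0, 2))  # close to sqrt(1/3)
```

The Lp norm replaces the coordinate sum with an integral over the underlying measure space; the numerical approximation is only meant to make that analogy tangible.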

As a simple first example of accessing this functionality, we can use RandomEntity to return a sample list of function spaces:

![RandomEntity["FunctionSpace", 5]](https://content.wolfram.com/sites/39/2016/12/1_InOut_PureMath2.png)

Similarly, EntityValue can be used to access curated properties for a given space:

![TextGrid[With[{props = {alternate names, associated people, Bessel inequality, Cauchy-Schwarz inequality, classes, classifications, dual space, inner product, isomorphic spaces, measure space, norm, related results, relationship graph, timeline, triangle inequality, typeset description}}, Transpose[{props, EntityValue[Lebesgue space L2(Rn, dxn) function space, props]}]], Dividers -> All, Background -> {Automatic, {{LightBlue, None}}}, BaseStyle -> 8, ItemSize -> {{13, 76}, Automatic}]](https://content.wolfram.com/sites/39/2016/12/2_In_PureMath.png)


As can be seen in various properties in this table, some mathematical representations required the introduction of new symbols not (yet) present in the Wolfram Language. This was accomplished by introducing them into a special PureMath` context. For example, after evaluating the above table, the following “pure math extension symbols” appear:

For now, these constructs are purely representational. Even so, they are more than placeholders for mathematical concepts and computational structures: they also enhance human readability by automatically adding traditional mathematical typesetting and annotations. This can be seen, for example, by comparing the raw semantic expressions in the table above with those displayed on the Wolfram|Alpha website:

In the longer term, many such concepts may be instantiated in the Wolfram Language itself. As a result, both this and any similar semantic projects to follow will help guide the inclusion and implementation of computational mathematical functionality within Mathematica and the Wolfram Language.

A slightly more involved example demonstrates how the entity framework can be used to construct programmatic queries. Here, we obtain a list of all curated function spaces associated with mathematician David Hilbert:

![EntityList[Entity["FunctionSpace", EntityProperty["FunctionSpace", "AssociatedPeople"] -> ContainsAny[{Entity["Person", "DavidHilbert::8r974"]}]]]](https://content.wolfram.com/sites/39/2016/12/4_InOut_PureMath.png)

One interesting property from the table above that warrants a bit more scrutiny is "RelationshipGraph". This is a hierarchical directed graph connecting all curated topological space types. A directed edge A↦B is drawn only when “S is a topological space of type A” implies “S is a topological space of type B” and no third type C satisfies both A ⇒ C and C ⇒ B. Implications that follow by transitivity are therefore represented by paths through the intermediate types rather than by direct edges. (In graph-theoretic terms, the graph is the transitive reduction of the implication relation.) For each function space, this graph also indicates (in red) the topological space types to which the given space belongs. For example, the Lebesgue space L2 has the following relationship graph:
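Keeping only implications that do not factor through an intermediate type is what graph theory calls a transitive reduction. The minimal Python sketch below illustrates the idea, assuming the implication graph is acyclic. The five space types and their implications are a small, standard textbook subset chosen for illustration, not the curated "RelationshipGraph" data, and the function names are our own.

```python
def transitive_closure(edges):
    """Reachability sets for a directed graph given as (source, target) pairs."""
    nodes = {n for edge in edges for n in edge}
    reach = {n: set() for n in nodes}
    for a, b in edges:
        reach[a].add(b)
    # Warshall's algorithm: allow each node k in turn as an intermediate step
    for k in nodes:
        for i in nodes:
            if k in reach[i]:
                reach[i] |= reach[k]
    return reach


def transitive_reduction(edges):
    """Keep a -> b only if no intermediate c gives a path a -> c -> ... -> b (DAGs only)."""
    reach = transitive_closure(edges)
    return {(a, b)
            for a in reach
            for b in reach[a]
            if not any(b in reach[c] for c in reach[a] if c != b)}


# A small textbook subset of implications (including redundant ones), for illustration only
implications = {
    ("Hilbert", "Banach"), ("Hilbert", "InnerProduct"), ("Hilbert", "Normed"),
    ("Banach", "Normed"), ("Banach", "TVS"),
    ("InnerProduct", "Normed"), ("Normed", "TVS"),
}
for edge in sorted(transitive_reduction(implications)):
    print(" -> ".join(edge))
```

The redundant edges such as Hilbert -> Normed and Banach -> TVS disappear, while both routes from Hilbert to Normed (via Banach and via InnerProduct) remain as paths.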

![EntityValue[Lebesgue space L2(Rn, dxn)(function space), relationship graph]](https://content.wolfram.com/sites/39/2016/12/5_InOut_PureMath.png)

Here we show a similar graph in a slightly more streamlined and schematic form:

This graph corresponds to the following topological space type memberships:

While portions of this graph appear in the literature, the above graph represents, to our knowledge, the most complete synthesis of the hierarchical structure of topological vector spaces available. (The preceding notwithstanding, it is important to keep in mind that the detailed structure depends on the detailed conventions adopted in the definitions of various topological spaces—conventions that are not uniform across the literature.) A number of interesting facts can be gleaned from the graph. In particular, it can immediately be seen that the well-known Hilbert and Banach spaces (which have high-level structural properties whose relaxations lead to more general spaces) fall at the top of the hierarchical heap together with “inner product space.” On the other hand, topological vector spaces are the “most generic” types in some heuristic sense.

During the curation process, we took great care to ensure that function space properties are correct for all parameter values. This can be illustrated by using code like the following to generate a tab view of Lebesgue spaces for various values of their parameter p and noting how the properties adjust accordingly:

One of the beautiful things about computational encoding (and part of the reason it is so desirable for mathematics as a whole) is that known results can be easily tested or verified. (Similarly, and maybe even more importantly, new propositions can be easily formulated and explored.) As an example, consider the duality of Lebesgue spaces Lp and Lq for 1/p+1/q=1 with 1<p<∞. (The endpoint cases p=1 and p=∞ are excluded because those spaces are not reflexive, so the double-dual argument below does not apply to them.) First, define a variable to represent the Lp entity:


Now, use the "DualSpace" property (which may be specified either as a string or as a fully qualified EntityProperty["FunctionSpace", "DualSpace"] object, given either directly in that form or in its corresponding formatted form) to obtain the dual entity:

![lq = EntityValue[lp, "DualSpace"]](https://content.wolfram.com/sites/39/2016/12/9_InOut_PureMath.png)

As can be seen, this formulation allows computation to be performed and expressed through the elegant paradigm of symbolic transformation of the entity canonical name. Taking the dual space of Lq in turn then gives:

![EntityValue[lq, "DualSpace"]](https://content.wolfram.com/sites/39/2016/12/10_InOut_PureMath.png)

Finally, applying symbolic simplification to the entity canonical name:

This verifies we have obtained the same space we originally started with:

In other words, the double dual (Lp)**, where * denotes the dual space, is equivalent to Lp. (Function spaces with this property are said to be reflexive.)
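At the level of parameters, this check rests on the map p ↦ q = p/(p − 1), which produces the conjugate exponent with 1/p + 1/q = 1 for 1 < p < ∞. Since q − 1 = 1/(p − 1), applying the map twice gives q/(q − 1) = p, the exponent-level counterpart of (Lp)** = Lp. The small Python sketch below verifies this with exact rational arithmetic. The function name is our own, and the sketch does not use the entity framework.

```python
from fractions import Fraction


def conjugate_exponent(p):
    """Return q with 1/p + 1/q = 1, i.e. q = p/(p - 1), for 1 < p < infinity."""
    p = Fraction(p)
    if p <= 1:
        raise ValueError("need 1 < p < infinity")
    return p / (p - 1)


for p in (Fraction(3, 2), 2, 3, Fraction(7, 3)):
    q = conjugate_exponent(p)
    assert 1 / Fraction(p) + 1 / q == 1    # q is the Hölder conjugate of p
    assert conjugate_exponent(q) == p      # applying the map twice returns p
    print(f"p = {p}, q = {q}")
```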

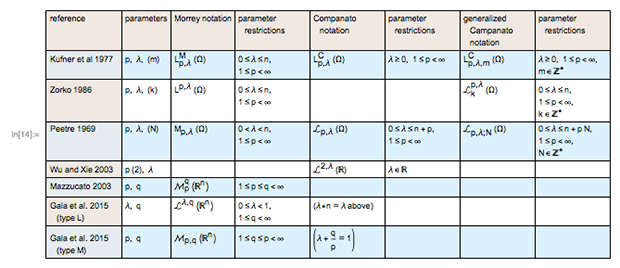

It is also important to emphasize that the curation of the existing literature on function spaces is not always straightforward, as illustrated in particular by the myriad of (mutually conflicting) conventions used for the interrelated collection of function spaces known as Campanato–Morrey spaces:

![cm = Cases[{#, Or @@ (StringMatchQ[Cases[CanonicalName[#], _String, \[Infinity]], "*Campanato*" | "*Morrey*"])} & /@ EntityList["FunctionSpace"], {e_, True} :> e]](https://content.wolfram.com/sites/39/2016/12/13_InOut_PureMath.png)
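The Cases/StringMatchQ idiom above collects every string at any depth of an entity's canonical name and keeps the entity if any of those strings matches "*Campanato*" or "*Morrey*". A rough Python analogue, using `fnmatchcase` for a case-sensitive wildcard match, is sketched below. Only the Lebesgue canonical name is modeled on one appearing in this post. The Morrey and Campanato names are hypothetical and invented for the sketch.

```python
from fnmatch import fnmatchcase


def strings_at_any_depth(expr):
    """Yield every string inside a nested list, roughly like Cases[expr, _String, Infinity]."""
    if isinstance(expr, str):
        yield expr
    elif isinstance(expr, (list, tuple)):
        for part in expr:
            yield from strings_at_any_depth(part)


def mentions_any(canonical_name, patterns=("*Campanato*", "*Morrey*")):
    """True if any string in the canonical name matches any wildcard pattern."""
    return any(fnmatchcase(s, pattern)
               for s in strings_at_any_depth(canonical_name)
               for pattern in patterns)


# Modeled on the L2 canonical name shown in this post (\[FormalN] written as "n")
lebesgue = [["LebesgueL", [["Reals", "n"], ["LebesgueMeasure", "n"]]], 2]
# Hypothetical canonical names, invented for this sketch
morrey = [["MorreyL", [["Reals", "n"], ["LebesgueMeasure", "n"]]], "p", "lambda"]
campanato = [["CampanatoL", [["Reals", "n"], ["LebesgueMeasure", "n"]]], "p", "lambda"]

names = [lebesgue, morrey, campanato]
selected = [name for name in names if mentions_any(name)]
print(len(selected))
```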

This challenge is made clear with the following table, whose creation required a meticulous study of the literature:

Because of these multiple conventions, in cases like this we chose to include multiple separate entities that are equivalent under appropriate (but possibly nontrivial) transformations of parameters and notation. For example:

![Grid[Transpose[Function[r, {cm[[r]], EntityValue[cm[[r]], "TypesetDescription"]}]@{4, 5}], Dividers -> All, ItemSize -> {{13, 68}, Automatic}, BaseStyle -> 10]](https://content.wolfram.com/sites/39/2016/12/15_InOut_PureMath.png)

Topological Space Types

A topological space may be defined as a set of points together with neighborhoods for each point, satisfying a set of axioms relating the points and neighborhoods. The definition of a topological space relies only upon set theory and is the most general notion of a mathematical space that allows for the definition of concepts such as continuity, connectedness and convergence. Other spaces, such as manifolds and metric spaces, are specializations of topological spaces with extra structures or constraints. Common examples of topological vector spaces include the Banach space (a complete normed vector space) and the Hilbert space (a Banach space whose norm comes from an inner product, which allows length and angle to be measured). The topological space types curated here could be considered more abstract than function spaces: a type such as “Banach space” is defined by the existence of a norm with certain properties, whereas a function space comes with a definite norm. Being so general, topological spaces are a central unifying notion and appear in virtually every branch of modern mathematics. The branch of mathematics that studies topological spaces in their own right is called point-set topology or general topology.
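To illustrate how such axioms become machine-checkable, the Python sketch below uses the equivalent open-set formulation rather than the neighborhood axioms: a family of subsets that contains the empty set and the whole set and is closed under unions and finite intersections. It is restricted to finite sets, where closure under pairwise unions and intersections suffices. Enumerating all 256 families of subsets of a 3-point set recovers the known count of 29 topologies. The function names are our own.

```python
from itertools import chain, combinations


def powerset(items):
    """All subsets of items, as frozensets."""
    items = list(items)
    return [frozenset(c)
            for c in chain.from_iterable(combinations(items, r) for r in range(len(items) + 1))]


def is_topology(X, opens):
    """Open-set axioms for a finite set X and a finite family of subsets."""
    X = frozenset(X)
    opens = set(opens)
    if frozenset() not in opens or X not in opens:
        return False
    if any(not U <= X for U in opens):
        return False
    # For a finite family, closure under pairwise unions and intersections
    # implies closure under all (finite) unions and intersections.
    return all((U | V) in opens and (U & V) in opens for U in opens for V in opens)


X = frozenset({1, 2, 3})
candidate_families = powerset(powerset(X))   # 2**8 = 256 families of subsets
count = sum(1 for family in candidate_families if is_topology(X, family))
print(count)  # 29
```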

EntityList can be used to see a complete list of curated topological space types:

![EntityList["TopologicalSpaceType"]](https://content.wolfram.com/sites/39/2016/12/16_InOut_PureMath.png)

Similarly, EntityValue[space type, "PropertyAssociation"] returns all curated properties for a given space:

![TextGrid[List @@@ Normal[DeleteMissing[EntityValue[Entity["TopologicalSpaceType", "HilbertSpace"], "PropertyAssociation"]]], Dividers -> All, Background -> {Automatic, {{LightBlue, None}}}, BaseStyle -> 8, ItemSize -> {{12, 77}, Automatic}]](https://content.wolfram.com/sites/39/2016/12/17_InOut_PureMath.png)


While more could be said and done with topological space types, in this project this domain was primarily used as a convenient way to classify function spaces. However, as the second project to be discussed in this blog will show, additional exploratory work is currently being done that could result in the augmentation of the human- (but not computer-) readable descriptions of topological spaces with semantically encoded versions potentially even suitable for use with automated proof assistants or theorem provers.

Functional Analysis Sources

A final component added in this project was a set of cross-linked literature references that provide provenance and documentation for the various conventions (definitions etc.) adopted in our curated functional analysis datasets. These references can be searched based on the journal in which a paper appears, the year or decade it was published, the author or the language in which it was written:

- functional analysis papers in Crelle’s journal

- functional analysis papers from the 1940s

- functional analysis works by Grothendieck

- Besov’s 1959 functional analysis paper

- functional analysis papers in Italian

For mathematicians who wish to explore the source of the data down to the page (theorem etc.) level, this information has also been encoded:

![Entity["TopologicalSpaceType", "HilbertSpace"][EntityProperty["TopologicalSpaceType", "References"]]](https://content.wolfram.com/sites/39/2016/12/18__InOut_PureMath.png)

Finally, we can use this detailed reference information in a way that provides a convenient overview of both existing notational conventions and those we adopted in this project:

![Entity["FunctionSpace", {{"LebesgueL", {{"Reals", \[FormalN]}, {"LebesgueMeasure", \[FormalN]}}}, 2}][EntityProperty["FunctionSpace", "TypesetNotationsTable"]]](https://content.wolfram.com/sites/39/2016/12/19_PureMath.jpg)

Encoding of Concepts and Theorems from Topology

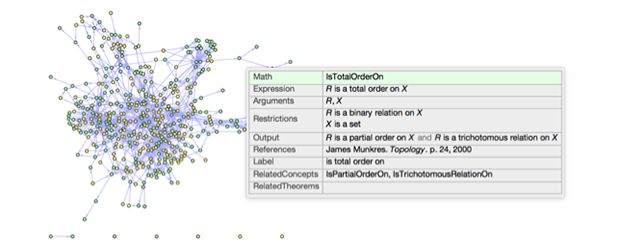

The second project we discuss in this blog is the not-unrelated augmentation of the Wolfram Language to precisely represent the definitions of mathematical concepts, statements and proofs in the field of point-set topology. This was done by creating an “entity store” for general topology consisting of concepts and theorems curated from the second edition of James Munkres’s popular Topology textbook. Although this project did not construct an explicit proof language (suitable, say, for use by a proof assistant or automated theorem prover), it did result in the comprehensive representation of 216 concepts and 225 theorems from a standard mathematical text, which is a prelude to any work involving machine proof.

EntityStore is a function introduced in Version 11 of the Wolfram Language that allows custom entity-property data to be packaged, placed in the cloud via the Wolfram Data Repository and then conveniently loaded and used. To load and use the general topology entity store, first access it via its ResourceData handle, then make it available in the Wolfram Language by prepending it to the list of known entity stores contained in the global $EntityStores variable:

![PrependTo[$EntityStores, ResourceData["General Topology EntityStore"]]](https://content.wolfram.com/sites/39/2016/12/20__InOut_PureMath.png)

As can be seen in the output, a nice summary blob shows the contents of the registered stores (in this case, a list containing the single store we just registered), including the counts of entities and properties in each of its constituent domains. Now that the entity store is registered, the custom entities it contains can be used within the Wolfram Language entity framework just as if they were built in. For example:

![RandomEntity["GeneralTopologyTheorem", 5]](https://content.wolfram.com/sites/39/2016/12/21__InOut_PureMath.png)

Similarly, we can see a full list of currently supported properties for topological theorems using EntityValue:

![EntityValue["GeneralTopologyTheorem", "Properties"]](https://content.wolfram.com/sites/39/2016/12/22__InOut_PureMath.png)

Before proceeding, we perform a little context path manipulation to make output symbols format more concisely (slightly deferring a discussion of why we do this until the end of this section):


A nice summary table can now be generated to show basic information about a given theorem:

![Entity["GeneralTopologyTheorem", "HausdorffImpliesT1"]["SummaryGrid"]](https://content.wolfram.com/sites/39/2016/12/24__InOut_PureMath.png)

"InputFormSummaryGrid" displays the same information as "SummaryGrid", but without applying the formatting rules we’ve used to make the concepts and theorems easily readable. It’s a good way to see the exact internal representation of the data associated with the entity. This can help us to understand what is going on when the formatting rules obscure this structure:

![Entity["GeneralTopologyTheorem", "HausdorffImpliesT1"]["InputFormSummaryGrid"]](https://content.wolfram.com/sites/39/2016/12/25__InOut_PureMath.png)

While it’s pretty straightforward to understand the mathematical assertion being made here, let’s look at each property in detail. Here, for example, is the display name (“label”) used for the entity representing the above theorem in the entity store, formatted using InputForm to display quotes explicitly and thus emphasize that the label is a string:

![Entity["GeneralTopologyTheorem", "HausdorffImpliesT1"]["Label"] // InputForm](https://content.wolfram.com/sites/39/2016/12/26__InOut_PureMath.png)

Similarly, here are alternate ways of referring to the theorem:

![Entity["GeneralTopologyTheorem", "HausdorffImpliesT1"]["AlternateNames"] // InputForm](https://content.wolfram.com/sites/39/2016/12/27__InOut_PureMath.png)

… the universally quantified variables appearing at the top level of the theorem statement (i.e. these are the variables representing the objects that the theorem is “about”):

![Entity["GeneralTopologyTheorem", "HausdorffImpliesT1"]["QualifyingObjects"]](https://content.wolfram.com/sites/39/2016/12/28__InOut_PureMath.png)

… the conditions these objects must satisfy in order for the theorem to apply:

![Entity["GeneralTopologyTheorem", "HausdorffImpliesT1"]["Restrictions"] // InputForm](https://content.wolfram.com/sites/39/2016/12/29-_InOut_PureMath.png)

… and the conclusion of the theorem:

![Entity["GeneralTopologyTheorem", "HausdorffImpliesT1"]["Statement"] // InputForm](https://content.wolfram.com/sites/39/2016/12/30__InOut_PureMath.png)

Of course, we could have just as easily listed Math["IsHausdorff"][Χ] as a restriction to this theorem and Math["IsT1"][Χ] as the statement since the manner in which the hypotheses are split between "Restrictions" and "Statement" is not unique. However, while the details of the splitting are subject to style and readability, the mathematical content of the theorem as expressed through any of these subjective choices is equivalent.
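The equivalence of the two splits can be illustrated on finite examples. In the Python sketch below, a theorem "holds" on a space if its restrictions fail or its statement is true. Split A keeps the whole implication in the statement, and split B moves the Hausdorff hypothesis into the restrictions. The dictionary layout and predicate functions are our own, and the keys only mirror the entity property names. Spaces are finite, with open sets listed explicitly. Agreement on a few examples illustrates the point but is not a proof.

```python
from itertools import combinations


def is_t1(X, opens):
    """T1: for distinct x, y there is an open set containing x but not y."""
    return all(any(x in U and y not in U for U in opens)
               for x in X for y in X if x != y)


def is_hausdorff(X, opens):
    """Hausdorff: distinct x, y lie in disjoint open sets."""
    return all(any(x in U and y in V and not (U & V) for U in opens for V in opens)
               for x, y in combinations(X, 2))


def holds(theorem, X, opens):
    """A theorem holds for (X, opens) if its restrictions fail or its statement is true."""
    if not all(r(X, opens) for r in theorem["restrictions"]):
        return True
    return theorem["statement"](X, opens)


# Split A: no extra restriction; the implication lives in the statement.
split_a = {"restrictions": [],
           "statement": lambda X, T: (not is_hausdorff(X, T)) or is_t1(X, T)}
# Split B: Hausdorff moved into the restrictions; the statement is just T1.
split_b = {"restrictions": [is_hausdorff], "statement": is_t1}

E = frozenset()
examples = {
    "discrete": ({1, 2, 3}, [E, frozenset({1}), frozenset({2}), frozenset({3}),
                             frozenset({1, 2}), frozenset({1, 3}), frozenset({2, 3}),
                             frozenset({1, 2, 3})]),
    "indiscrete": ({1, 2, 3}, [E, frozenset({1, 2, 3})]),
    "sierpinski": ({1, 2}, [E, frozenset({1}), frozenset({1, 2})]),
}

for name, (X, T) in examples.items():
    print(name, is_hausdorff(X, T), is_t1(X, T), holds(split_a, X, T), holds(split_b, X, T))
```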

Finally, we can retrieve metadata about the source from which the theorem was curated:

![Entity["GeneralTopologyTheorem", "HausdorffImpliesT1"]["References"] // InputForm](https://content.wolfram.com/sites/39/2016/12/31__InOut_PureMath.png)

Now, backing up a bit, you may well wonder about expressions with structures such as Category[...] and Math[...] that you’ve seen above. Let’s take a look at one of them, but this time through a general topology concept instead of a theorem:

![Entity["GeneralTopologyConcept", "IsHausdorff"]["RelatedTheorems"]](https://content.wolfram.com/sites/39/2016/12/32__InOut_PureMath.png)

![Entity["GeneralTopologyConcept", "IsHausdorff"]["SummaryGrid"]](https://content.wolfram.com/sites/39/2016/12/33_._InOut_PureMath.png)

Some of these properties are shared with corresponding properties for theorems:

![EntityValue["GeneralTopologyConcept", "Properties"]](https://content.wolfram.com/sites/39/2016/12/34__InOut_PureMath.png)

You can see the common properties by intersecting the full lists of supported properties for concepts and theorems:

![Intersection @@ (CanonicalName[EntityValue[#, "Properties"]] & /@ {"GeneralTopologyConcept", "GeneralTopologyTheorem"})](https://content.wolfram.com/sites/39/2016/12/35__InOut_PureMath.png)

While properties are similar across topology theorems and concepts, there are some differences that should be addressed. "Arguments" for a concept takes the role of "QualifyingObjects" for a theorem. Just as theorems are thought of as applying to certain objects, concepts are thought of as functions that can be applied to certain objects. The output can be a Boolean value, as in this case; we call such a concept a property or a predicate. Other concepts represent mathematical structures. For example, Math["MetricTopology"] takes a metric space as an argument and outputs the topology induced by the metric.
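The distinction between predicate-valued and structure-valued concepts can be mimicked with ordinary functions. In the Python sketch below, `metric_topology` plays the role of a structure-valued concept such as Math["MetricTopology"], restricted to finite metric spaces, and `is_discrete` is a Boolean-valued predicate applied to its output. The names are hypothetical, and this is not the entity store's representation. Every finite metric space induces the discrete topology, which the predicate confirms.

```python
from itertools import chain, combinations


def all_subsets(X):
    X = list(X)
    return [frozenset(c)
            for c in chain.from_iterable(combinations(X, r) for r in range(len(X) + 1))]


def metric_topology(X, d):
    """Structure-valued concept: the topology that metric d induces on a finite set X."""
    def ball(x, r):
        return frozenset(y for y in X if d(x, y) < r)

    def is_open(U):
        for x in U:
            # Try radii equal to distances to the other points (the smallest gives
            # the ball {x}); with no other points, radius 1.0 also gives {x}.
            radii = [d(x, y) for y in X if y != x] or [1.0]
            if not any(ball(x, r) <= U for r in radii):
                return False
        return True

    return {U for U in all_subsets(X) if is_open(U)}


def is_discrete(X, opens):
    """Predicate (Boolean-valued) concept: is every subset open?"""
    return set(opens) == set(all_subsets(X))


points = [0.0, 1.0, 3.0]
T = metric_topology(points, lambda a, b: abs(a - b))
print(len(T), is_discrete(points, T))
```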

A "Restrictions" property for concepts is very similar to the corresponding property for theorems. Just as with theorems, nothing in principle stops us from removing this condition from "Restrictions" and conjoining it to the output. The difference is that this can always be done for theorems, but for concepts only when they represent properties, since only then is the output interpreted as having a truth value:

![Entity["GeneralTopologyConcept", "IsHausdorff"]["Restrictions"] // InputForm](https://content.wolfram.com/sites/39/2016/12/36__InOut_PureMath.png)

Finally, the "Output" property here gives the value of the expression Math["IsHausdorff"][X]:

![Entity["GeneralTopologyConcept", "IsHausdorff"]["Output"] // InputForm](https://content.wolfram.com/sites/39/2016/12/37__InOut_PureMath.png)

When we use such an expression in a theorem or in the definition of another concept, we interpret it as equivalent to what we see in "Output". As we know, stating and understanding mathematics is much easier when we have such shorthands than if all theorems were stated in terms of atomic symbols and basic axioms.

Two of the most exciting properties on this list are "RelatedConcepts" and "RelatedTheorems". One of our goals is to represent mathematical concepts and theorems in a maximally computable way, and these properties are just one example of the computations we hope to do with these entities. A concept appears in "RelatedConcepts" if it is used in the "Restrictions", "Notation" or "Output" of a concept or in the "Restrictions", "Notation" or "Statement" of a theorem. A theorem appears in the "RelatedTheorems" of a concept if that concept appears in the "RelatedConcepts" of that theorem. With this in mind, take a closer look at the examples above:
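The rule above can be sketched in Python to show how such relations are computed rather than curated. The code walks each expression tree, collects every concept reference, and then inverts the theorem-to-concept map to obtain each concept's related theorems. The nested-tuple encoding, the stand-in ("Category", ...) restriction, the concept IsMetrizable and the second theorem MetrizableImpliesHausdorff are all hypothetical. Only IsHausdorff, IsT1 and HausdorffImpliesT1 come from the text above.

```python
from collections import defaultdict

THEOREM_FIELDS = ("Restrictions", "Notation", "Statement")


def concepts_in(expr):
    """Names of all ("Math", name, ...) concept references inside a nested expression."""
    found = set()
    if isinstance(expr, (tuple, list)):
        if isinstance(expr, tuple) and len(expr) >= 2 and expr[0] == "Math":
            found.add(expr[1])
        for part in expr:
            found |= concepts_in(part)
    return found


def related_concepts(entity, fields):
    """Union of concept references over the given fields (missing fields count as empty)."""
    return set().union(*(concepts_in(entity.get(f, ())) for f in fields))


def invert(relation):
    """Turn {source: {targets}} into {target: {sources}}."""
    inverse = defaultdict(set)
    for source, targets in relation.items():
        for target in targets:
            inverse[target].add(source)
    return dict(inverse)


theorems = {
    "HausdorffImpliesT1": {
        "Restrictions": [("Category", "TopologicalSpace", "X")],
        "Statement": ("Implies", ("Math", "IsHausdorff", "X"), ("Math", "IsT1", "X")),
    },
    "MetrizableImpliesHausdorff": {   # hypothetical second theorem
        "Restrictions": [("Category", "TopologicalSpace", "X")],
        "Statement": ("Implies", ("Math", "IsMetrizable", "X"), ("Math", "IsHausdorff", "X")),
    },
}

related = {name: related_concepts(t, THEOREM_FIELDS) for name, t in theorems.items()}
related_theorems = invert(related)
print(sorted(related_theorems["IsHausdorff"]))
```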

![Entity["GeneralTopologyTheorem", "HausdorffImpliesT1"]["RelatedConcepts"]](https://content.wolfram.com/sites/39/2016/12/38__InOut_PureMath.png)

![Entity["GeneralTopologyConcept", "IsHausdorff"]["RelatedTheorems"]](https://content.wolfram.com/sites/39/2016/12/39__InOut_PureMath.png)

It is important to emphasize that these relations were not curated, but rather computed, which is possible because of the precise, consistent and expressive language used to encode the concepts and theorems. As a matter of convenience, however, they’ve been precomputed for speed to allow you to, say, easily find the definition of concepts appearing in a theorem.

As an example of the power of this approach, we can use the Wolfram Language’s graph functionality to easily analyze the connectivity and structure of the network of topological theorems and concepts in our corpus:

![domains = {"GeneralTopologyConcept", "GeneralTopologyTheorem"}; nodes = Join @@ (EntityList /@ domains); labelednodes = Tooltip[Style[#, EntityTypeName[#] /. { "GeneralTopologyConcept" -> RGBColor[0.65, 1, 0.65], "GeneralTopologyTheorem" -> RGBColor[1, 1, 0.5] }], #["SummaryGrid"]] & /@ nodes; edges = Join @@ (Flatten[ Thread /@ Normal@EntityValue[#, "RelatedConcepts", "EntityAssociation"]] & /@ domains);](https://content.wolfram.com/sites/39/2016/12/In40_PureMath.png)
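The same kind of connectivity analysis can be sketched in plain Python. Each (entity, related concept) pair is treated as an undirected edge, and the code then computes node degrees and connected components by breadth-first search. The edge list below is a small hypothetical sample with made-up entity names, not data drawn from the entity store.

```python
from collections import defaultdict, deque


def build_graph(edges):
    """Undirected adjacency sets from (entity, related concept) pairs."""
    adj = defaultdict(set)
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    return adj


def connected_components(adj):
    """Breadth-first search from each not-yet-visited node."""
    seen, components = set(), []
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue, component = deque([start]), {start}
        while queue:
            node = queue.popleft()
            for nbr in adj[node]:
                if nbr not in seen:
                    seen.add(nbr)
                    component.add(nbr)
                    queue.append(nbr)
        components.append(component)
    return components


# Hypothetical (theorem -> related concept) edges, for illustration only
edges = [
    ("HausdorffImpliesT1", "IsHausdorff"),
    ("HausdorffImpliesT1", "IsT1"),
    ("MetrizableImpliesHausdorff", "IsMetrizable"),
    ("MetrizableImpliesHausdorff", "IsHausdorff"),
    ("CompactHausdorffImpliesNormal", "IsCompact"),
    ("CompactHausdorffImpliesNormal", "IsHausdorff"),
    ("CompactHausdorffImpliesNormal", "IsNormal"),
    ("ContinuousImageOfConnected", "IsConnected"),
    ("ContinuousImageOfConnected", "IsContinuous"),
]

adj = build_graph(edges)
degree = {node: len(nbrs) for node, nbrs in adj.items()}
components = connected_components(adj)
print(len(components), degree["IsHausdorff"])
```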

As was the case for function spaces, a number of extension symbols to the Wolfram Language were introduced in this project. We have already encountered the Math and Theorem extensions, but there are a number of others as well. For now, they have been placed in a GeneralTopology` context (analogous to the PureMath` context introduced for function spaces). This can be verified by examining the context of such symbols, e.g.:

![Context[Math]](https://content.wolfram.com/sites/39/2016/12/InOut45_PureMath.jpg)

This also explains why we appended GeneralTopology` to our context path: doing so suppresses verbose context formatting in our outputs (so we see Math instead of GeneralTopology`Math). Here is a complete listing of language extensions introduced in the GeneralTopology` context:

Again—as was the case for language extensions introduced for function spaces—some of these may eventually find their way into the Wolfram Language. However, independent of such considerations, these two small projects already show the need for some kind of infrastructure that allows incorporation, sharing and alignment of language extensions from different—and likely independently curated—domains.

We close with some experimental tidbits used to enhance the readability and usability of the concepts and theorems in our entity store. You have probably already noticed the nice formatting in "SummaryGrid" and perhaps wondered how it was achieved. The answer is that it was produced using a set of MakeBoxes assignments packaged inside the entity store via the property EntityValue["GeneralTopologyTheorem", "TraditionalFormMakeBoxAssignments"]. Similarly, in order to provide usage messages for the GeneralTopology` symbols (which must be defined before messages can be associated with them), we have packaged the messages in the special experimental EntityValue["GeneralTopologyTheorem", "Activate"] property, which can be activated as follows:


The result is the instantiation of standard Mathematica-style usage messages such as:

While the details of eventually incorporating such features into a standard framework remain the subject of ongoing design and technical discussions, the ease with which it is possible to experiment with such functionality (and to implement semantic representation of mathematical structures in general) is a testament to the power and flexibility of the Wolfram Language as a development and prototyping tool.

Conclusion

The projects described here, undertaken at Wolfram Research during the last year, have explored the semantic representation of abstract mathematics. To facilitate experimentation with this functionality, we have posted two small notebooks to the cloud (the function space entity domain and the topology entity store) that allow interactive exploration and evaluation without the need to install a local copy of Mathematica. We welcome your feedback, comments and even collaboration in these efforts to extend and push the limits of the mathematics that can be represented and computed.

As a final note, we would like to emphasize that significant portions of the work discussed here were carried out as part of internship projects. If you are (or know) a motivated mathematics or computer science student interested in breaking new ground in the semantic representation of mathematics, please consider 1) learning the Wolfram Language (which, since you are reading this, you may well have already done) and 2) joining the Wolfram internship program next summer!

![With[{props = {"Norm", "TypesetDescription", "Classifications"}, lebesgue = Entity["FunctionSpace", {{"LebesgueL", {{"Reals", \[FormalN]}, {"LebesgueMeasure", \[FormalN]}}}, \[FormalP]}]}, TabView[Table[ space -> Grid[Transpose[{props, EntityValue[space, props]}], Dividers -> All, Alignment -> {Left, Center}], {space, {lebesgue, lebesgue /. \[FormalP] -> 1/3, lebesgue /. \[FormalP] -> 3, lebesgue /. \[FormalP] -> "Infinity"}}]]]](https://content.wolfram.com/sites/39/2016/12/7_PureMath.png)
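The example above evaluates the "Norm", "TypesetDescription" and "Classifications" properties of the Lebesgue space on the reals for a symbolic exponent p and for p = 1/3, 3 and Infinity. As background for reading that output, the standard textbook definitions for Lebesgue measure on ℝ^n are recalled below. They are general mathematics, not output reproduced from the function space entity domain.

```latex
\|f\|_{p} = \left( \int_{\mathbb{R}^{n}} |f(x)|^{p} \, dx \right)^{1/p}, \qquad 0 < p < \infty,
\qquad
\|f\|_{\infty} = \operatorname*{ess\,sup}_{x \in \mathbb{R}^{n}} |f(x)|
```

For 1 ≤ p ≤ ∞ these expressions are norms and the resulting spaces are Banach spaces. For 0 < p < 1, as with p = 1/3, the triangle inequality fails, and ‖·‖_p is only a quasi-norm. This is the kind of distinction the "Classifications" property is meant to capture.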

Eric and Ian

This is great work and, as a former pure mathematician, something that I have long wanted WR to undertake. Is it also possible to get a copy of the notebook that was used to generate this post?

Best Regards

Michael

You can see and evaluate many of the examples using the cloud notebooks linked to near the bottom of the blog:

https://exploration.open.wolframcloud.com/objects/exploration/FunctionSpaces.nb

https://exploration.open.wolframcloud.com/objects/exploration/TopologyEntityStore.nb

This reminds me of my Real Analysis class, where I took to drawing Venn diagrams on butcher paper to keep track of the subordinations and properties. A case where you wished the theorems outnumbered the definitions :) It did help me keep the structures in mind, and I see no reason it couldn't be mathematically useful.

I very rarely write comments, but I felt I had to write something after reading this article. …

I have a BS in Computational Math, however, I am not the very best mathematician. I consider myself maybe a below avg mathematician. I like to learn new things and apply knowledge to the real world.

I think that numerous schools like to teach the commercial aspect of mathematics, which is all based on calculations. That is how I learned math, with no understanding of concepts or theorems. When I went to college and took high-level math courses, I hit the wall pretty hard but my brain was wired differently and I could think in an abstract way. I had to force myself to start all over again and change the way I understood mathematics. I was only seeing a piece of paper with a bunch of words and numbers that I had to memorize, but it was not working anymore. It was not until I took Abstract Algebra that something clicked in my head and I was able to visualize sets and objects, etc. Now I use this knowledge at work to attack analytical problems, and I have been very successful. Right now, I am self-taught. I buy a book and learn from it, and I want my kids to do the same in every aspect of their lives and professional careers.

Now that I am homeschooling my kids, I teach them the concepts first and then how to apply them in the real world, and they are getting it. It helps them think in a step-by-step manner and, to my surprise, they like to visualize everything in their minds too. If they can visualize it, that means they understand it.

Math is beautiful and perfect. My kids are currently doing ALEKS, and they love it and do it on their own. We do not have to get a PhD in math to benefit from it. Math helps us think differently, and this is what matters to me.

If you find a way to develop new theorems by using computers, I want to see it! Good luck.

Great article.

-tony