New in 13: Data Structures, Compilation & Parallelization

Two years ago we released Version 12.0 of the Wolfram Language. Here are the updates in data structures, compilation and parallelization since then. The contents of this post are compiled from Stephen Wolfram’s Release Announcements for 12.1, 12.2, 12.3 and 13.0.

Data Structures & Structured Data

A Computer Science Story: DataStructure (March 2020)

One of our major long-term projects is the creation of a full compiler for the Wolfram Language, targeting native machine code. Version 12.0 was the first exposure of this project. In Version 12.1 there’s now a spinoff from the project—which is actually a very important project in its own right: the new DataStructure function.

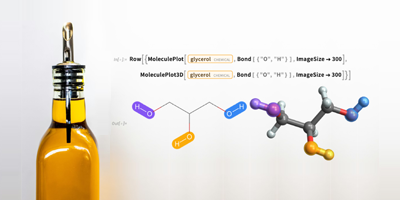

We’ve curated many kinds of things in the past: chemicals, equations, movies, foods, import-export formats, units, APIs, etc. And in each case we’ve made the things seamlessly computable as part of the Wolfram Language. Well, now we’re adding another category: data structures.

Think about all those data structures that get mentioned in textbooks, papers, libraries, etc. Our goal is to have all of them seamlessly usable directly in the Wolfram Language, and accessible in compiled code, etc. Of course it’s huge that we already have a universal “data structure”: the symbolic expressions in the Wolfram Language. And internal to the Wolfram Language we’ve always used all sorts of data structures, optimized for different purposes, and automatically selected by our algorithms and meta-algorithms.

But now with DataStructure there’s something new. If you have a particular kind of data structure you want to use, you can just ask for it by name, and use it.

Here’s how you create a linked list data structure:

ds = CreateDataStructure["LinkedList"]

Append a million random integers to the linked list (it takes 380 ms on my machine):

Do[ds["Append", RandomInteger[]], 10^6]

Now there’s immediate visualization of the structure:

ds["Visualization"]

Here’s the numerical mean of all the values:

Mean[N[Normal[ds]]]

Like so much of what we do, DataStructure is set up to span from small scale and pedagogical to large scale and full industrial strength. Teaching a course about data structures? Now you can immediately use the Wolfram Language, storing everything you do in notebooks, automatically visualizing your data structures, etc. Building large-scale production code? Now you can have complete control over optimizing the data structures you’re using.

How does DataStructure work? Well, it’s all written in the Wolfram Language, and compiled using the compiler (which itself is also written in the Wolfram Language).

In Version 12.1 we’ve got most of the basic data structures covered, with various kinds of lists, arrays, sets, stacks, queues, hash tables, trees, and more. And here’s an important point: each one is documented with the running time for its various operations (“O(n)”, “O(n log(n))”, etc.), and the code ensures that that’s correct.
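As a small illustration of asking for a structure by name, here is a minimal sketch using a stack. It assumes the "Stack" structure with its "Push", "Peek", "Pop", "Length" and "EmptyQ" operations; the variable name stack is purely illustrative.

```wolfram
(* Create an empty stack by name *)
stack = CreateDataStructure["Stack"];

(* Push 1, 2, 3 in order, so 3 ends up on top *)
Do[stack["Push", i], {i, 3}];

stack["Peek"]    (* look at the top element without removing it *)
stack["Pop"]     (* remove and return the top element *)
stack["Length"]  (* number of elements still on the stack *)
stack["EmptyQ"]  (* False while elements remain *)
```

The same pattern of ds["Operation", args] is what the linked list example uses with "Append".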

It’s pretty neat to see classic algorithms written directly for DataStructure.

Create a binary tree data structure (and visualize it):

(ds = CreateDataStructure["BinaryTree",
    3 -> {1 -> {0, Null}, Null}])["Visualization"]

Here’s a function for rebalancing the tree:

RightRotate[y_] :=
 Module[{x, tmp},
  x = y["Left"]; tmp = x["Right"]; x["SetRight", y];
  y["SetLeft", tmp]; x]

Now do it, and visualize the result:

RightRotate[ds]["Visualization"]

Tabular Data: Computing & Editing (March 2020)

Dataset has been a big success in the six years since it was first introduced in Version 10.0. Version 12.1 has the beginning of a major project to upgrade and extend the functionality of Dataset.

The first thing is something you might not notice, because now it “just works”. When you see a Dataset in a notebook, you’re just seeing its displayed form. But often there is lots of additional data that you’d get to by scrolling or drilling down. In Version 12.1 Dataset automatically stores that additional data directly in the notebook (at least up to a size specified by $NotebookInlineStorageLimit) so when you open the notebook again later, the Dataset is all there, and all ready to compute with.

In Version 12.1 a lot has been done with the formatting of Dataset. Something basic is that you can now say how many rows and columns to display by default:

Dataset[CloudGet["https://wolfr.am/L9o1Pb7V"], MaxItems -> {4, 3}]

In Version 12.1 there are many options that allow detailed programmatic control over the appearance of a displayed Dataset. Here’s a simple example:

Dataset[CloudGet["https://wolfr.am/L9o1Pb7V"],
 MaxItems -> 5,
 HeaderBackground -> LightGreen,
 Background -> {{{LightBlue, LightOrange}}},
 ItemDisplayFunction -> {"sex" -> (If[# === "female", \[Venus], \[Mars]] &)}
]

A major new feature is “right-click” interactivity (which works on rows, columns and individual items).

Dataset is a powerful construct for displaying and computing with tabular data of any depth. But sometimes you just want to enter or edit simple 2D tabular data—and the user interface requirements for this are rather different from those for Dataset. So in Version 12.1 we’re introducing a new experimental function called TableView, which is a user interface for entering—and viewing—purely two-dimensional tabular data:

TableView[{{5, 6}, {7, 3}}]

Like a typical spreadsheet, TableView has fixed-width columns that you can manually adjust. It can efficiently handle large-scale data (think millions of items). The items can (by default) be either numbers or strings.

When you’ve finished editing a TableView, you can just ask for Normal and you’ll get lists of data out. (You can also feed it directly into a Dataset.) Like in a typical spreadsheet, TableView lets you put data wherever you want; if there’s a “hole”, it’ll show up as Null in lists you generate.

TableView is actually a dynamic control. So, for example, with TableView[Dynamic[x]] you can edit a TableView, and have its payload automatically be the value of some variable x. (And, yes, all of this is done efficiently, with minimal updates being made to the expression representing the value of x.)
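The two workflows just described can be sketched as follows. This is only an illustration, assuming the experimental TableView behaves as described above; the names x, tv and rows are hypothetical.

```wolfram
(* A variable holding the table contents *)
x = {{5, 6}, {7, 3}};

(* Edits made in the displayed table are written back to x *)
TableView[Dynamic[x]]

(* A static TableView can be converted back to nested lists *)
tv = TableView[{{5, 6}, {7, 3}}];
rows = Normal[tv]

(* ...and those lists can be fed directly into a Dataset *)
Dataset[rows]
```

Since TableView is a front end control, the Dynamic form is mainly useful inside a notebook.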

Compilation & Parallelization

Advances in the Compiler: Portability and Librarying (May 2021)

We have a major long-term project of compiling Wolfram Language directly into efficient machine code. And as the project advances, it’s becoming possible to do more and more of our core development using the compiler. In addition, in things like the Wolfram Function Repository, more and more functions—or fragments of functions—are able to use the compiler.

In Version 12.3 we took an important step to make the workflow for this easier. Let’s say you compile a very simple function:

fun = FunctionCompile[Function[Typed[x, "Integer64"], x + 1]]

What is that output? Well, in Version 12.3 it’s a symbolic object that contains raw low-level code:

fun[[1]]["CompiledIR"]

But an important point is that everything is right there in this symbolic object. So you can just pick it up and use it:


CompiledCodeFunction[

Association[

"Signature" -> TypeSpecifier[{"Integer64"} -> "Integer64"], "Input" ->

Compile`Program[{},

Function[

Typed[x, "Integer64"], x + 1]], "ErrorFunction" -> Automatic,

"InitializationName" ->

"Initialization_18398332_a4ff_43e1_bda5_14c193a2b3d6",

"ExpressionName" -> "Main_ExprInvocation", "CName" ->

"Main_CInvocation", "FunctionName" -> "Main", "SystemID" ->

"MacOSX-x86-64", "VersionData" -> {12.3, 0, 0}, "CompiledIR" ->

Association["MacOSX-x86-64" -> ByteArray[CompressedData["

1:eJydVwtwG9W5PqvXSra8Wj8gMpbEyq8qwZjVI7Ic2VTS2omcOEQ2bnHaMNIq

krETP2RZ9SukXT2IFDBUAZd60pTaJMP0decKEu4NHUrlxw0mONRxcokbUmOP

HZO2STCQFOaS0ntWklNIQmfandnz+Pd/ne/8/3/OKp0d9U4EALCSCcBcfE06

HIIc+J6FcxQAxELF59ZDAh/SnGKycPP2N5oe/fzYtvQ6ppaVU4oBaOQCkM5R

Ag6c3w1f1F9wwDzJqxHgmvJnkZC4iC/MVfCecjLSao7l2XTLBK7JMQilW9I0

zojVM6EsqMoKD+DmZzmuSeHLOQS1MUK8hWicIek61bqo+vHYjgPEiUmodz8B

AAl7TX5ERLie4GUXHAnBZjE0Es3Jqh0YSA9N1OITozKzKS5DVDlmvJkMgMER

nKdZFsaHhULLWzLEkq6uNt3j3ewnLeO4jmMTiFqaf7PlYP7mocffoRUuZf4D

Tx669OPiQkL4rS7edN1MTt3Ytexz2X8LnKmt0tao8IeefSmSVfyz4y9c+jE7

aCDOveIYPPBtwZb3IvcFnyCW7jJbhCX8YSWgnLklR5Sv4PcEgQvj1SJR0zq6

PG7bkvNbUCcF4DBcR15k/u/wQQmxXjXGjiQMz2aSg70x87372Pm9IEPIDchP

0orlkYx5s+IkLZuhFSdHMqYQxXmlbBIhwx5HuE+FMp5wT3PQ50G7hsJ6Eu1t

DnYL0X4TGpeN+eXLIzIwJp8byTg7ojgPBRnFmFk2OyJb/qo4t0eI9nyN+IuK

C7RibFSWMj0sH/Mrpm+KO7hmHvMqsvYL6PIPwA3AcE3nGSkSBemmNvIZ0Ah2

Bf3iOKchLxICz2dE8oEoBv5X4EQYF3eMZ+KUmF4dbeYRyPMZN+ZvsMsGVkYQ

Qkaxi6Tkk2nBCiG5SGJ/IiUXpwXLQHIFx5bAEGWIjVcMck3Hx43HzBuOB8un

qLwhbsUxszHErRzmOrCFOPaJDQML2IfTgj/bJFeApM0kWSCwyzbsk6+K+42h

oPFrxOskV0nJggNLmm6bxhZMkks3xWPmwheS2/RNMA+i7GYxmLBbFe6DMDJo

nyrcaYX4JCENe4hbtmNmVD5FK87HZfMjGXA3LyFy0CVEu4fCXiDs6Q3rbeH+

ZijI7ReinY6wd+gW8QtK2YWvEY/0e8J9QrRLdcfdZDJ4VeCxmD/Cmxfr8Xpw

iM3jGhiTabDPDjgsRC0ucAZs0ux93Jpi3skQTR8U+bXK3mLeeAgcxKuyOSKQ

Xfx4nGv8jG8cDuqFaC+DwmXribAnGt5jRXtmHWkQsivD6Ifs/qE9AO0nUe8w

2kWgbUDi9aCdiTFLTDLAsQP1DaPdJrQtisGWnULQGdQzjHYSaO+04CqD9uNo

d1Iw8fUojnoZdLNEmJll2jcwCBCdZejQx6Ovlj/+3wst3duxJ9/aoV0jdn3/

bfOpFem1Q4EYUfGOwPKH5vs7D77AGTm4UGE9wXnL8Urjye899mEUiHAWCFhi

gLDQYS3KhEUNzhkghBSGJ/7Fr08BjgmsPlFG+S1ZgwgHXJYHpHhqaUwGEhII

ztJE0vqLXB4CbKzWBJs1Hl1X9KIIZHGTtqBOzr6GDepOAGTsLEHLIo/semqS

y2EdwFfN/che5QAwXVJygECoX57rnUhYA0nHWFVv1B2AttO+7NVygYablEES

rSg6S9gMu6GKdB5b1zWKITw3szY6ICredIJHcTZC2sswHoZgrxUysokFWVMO

UhmhDKHxAQsM7F5YZzrFyWS8xmBasQUWB/k7I7Imkp9gC+ZNcSsnqcoBbuVU

7wCsS3uEaJ817PGg/R5udy/MhXDuVCZ2OYotkJJ2UrK4gq3YMC0+IZ9TymaV

8jFa0UTuN74eNE5T5ce4Fa8HK0Jc49RKTrcD3dOMljWHvbBl2HDzOGDood2f

8aHaHiZsF4Y7pTBZYA76S66uYFfnsetx7DLJdU6OyJZGZFOJ9gItO6mUTfhh

aRW8G6XKJ6nyoUR7fNwwSBkiXOPg+APQ6I2A8SzXGBsXv9kS9jSGvZ6gD6ak

I+yThn3D4dx3HpZcBZKPbdhSFFtcLS9aQiW/MAprrGIGZqhfcWFetrQg/zOi

aJLWGWLUhkOw2tgKUwtxhHt60d6hutprUQmLgGIXW+QvKeVzo/LvIwrWYaV8

hRY0PBE0TI0bY1QlBOTGWMUSZYzA6fjA/6Sx1aYH+gNzXIV6h/w7FnHschy7

CAsdWTA6Y1YsLayZXZDPQQesucZYsPJYMC80bhigyqfGK46Plk+OwzVWTFmP

dcLq4UA7IYCN4dz3TmCXHZIr88nyK7lISP5qw9pxbJFMlOV5wZ/msSUb9hlJ

LZxXslVlBUl4Tm88+4dxQ4gF7YFZasMUtSFCbYCrnqYenGqsgGUN1qUeK9pv

Rfeq4P0C9MHAVMPeq7ASVEScK/JqrESBf+DAgdNVw7jKVaHWWJ6JKg8b8NfB

c3k7wCMdrU1euo2gOto8La1uL6EpVWtKtaUk0dThJbbSO4ltDxONRK9BT6j0

uvudLb61hGor3UdoSggNqVGvfcTtItYTCQqcEur1G9TqDTqNljcq9pMUOWl1

BOrpnUd3d9UU3Nd1OK9I+vFre3Zqn0vf3fXyfc/9ZMuerrwiov43KVLmr7bs

LPj8xS2778srUoFfwJzJhWtJT/OqYCyi/Y2eIaoShlSMKp8eDyzLToxkXDKz

qbKslCf3+G7dogP7wAZDR7LEBpDkAxt2Lcb42cODjezecGdyXxs9h8YNxxDj

kWDlICwd4DSsCwL2rjVVcSRoPAQDFh5cwQoYvNFxNstpiKuCrec8qoQtIezd

rHAQKItgXyAQ4AGHoyJIHsR5R8V+fECaYxIGzJyMo7yx5jHQimM8DPJtrGsw

NXz6jWn8FJNzfe+Zvi/eXzZByner6pC6R3/CtnvvjSu3mnR/u843nDHrrHXv

lz29+LsMkLJ/9y32eWehfZIDChH6eSUekO/LiOwXMneN+UHy7Fnjqc2svvHq

XoByiVP2c8VKZYZGGQ+44iHD1g90EyKCH7NO2N8Hq/qL77Q+qH/7r7f4N6vw

yYgfCRFS6bcdA8W80RKK4BUI+HDh0R1vlvjJgyR47efGVhOPg7RmYbwsKL+v

E67u3INeIID2t333BLvAzyf5pn8s7UpBdx048NGZ/2I5P33QKXsSWM98k5bU

/czk5FgXs9dokNT5cGf/TJl4PQf8NDOiDH5vrU3E5+SqSQbncQ+nPXM6RAy5

hSHHxDCw4OKsvwwV4RuzBcNazmFXrJS/LldPcAJAJ2OrvQS+3UlfndJN/Krr

VwpQBwlmblz/qq9NrK/nXkr66uXsFxFPb9sxybvpH53wrybhH5rcH1EBqLZx

njBZRQMgx2FS5phzo+tZ3yfgnpmFgUwuV2gmV9YtzaYcjQ9bvqM3PX06pmzO

SuNpLuAGB/m2STyaxL73OzOtZPZpgbU6LSNbrAq2rZ2erA0xDUem3xs5/kvx

u3I9EfADr6N7Amw34eIJQpy2K70pdyBfqiYBxOWH4iO7g3NY2punqoRQx2/l

0v982/FHmpsvFTqKA5Z19ZPoHJzhQscsg59nwEaxWMAbZcTTce2+vIBY3JSL

FQ5ThDaTU39gLIZkCnlR5GiBYBMXhr+gFQ8h94jz8F04Hi9BXtloYHHIS8Rh

Tjw/8+97+ZuQkqyPPixLTH/QXpbabP+9nHrwVNWN9W2yrBkim1NiFdRGzvge

og4x6uqcjxY3Odgw/j9EKmZ0v9srOJFTN/H79/3sp0/ftXyWddfQk/lg5tq2

WepsKlY6iWQuazA8P4Rc5VnnGxn+S/zm+R0M+ld+kscN/7MeY68G3EGSu5pP

MNgegH1t6mRvTOljezYJo6lg1MI+m81n2FewOlJ8q99Bip99AkjyX4/lYSMl

cR8vBCCMJC8od6cwYunCEvgfgyRpbA2/a5UO+V+DdB1rk7X/JT1XID2Trfsg

ebVZpX+R8nE/SH5P0A1wzEn+jx4Eye+r/EWcpI//8SV+1m5Ziv7GLfQaTtLe

71P5s0rfzkn6P3eL/x0pPZ/cosfPSfoBUhgl/Ene0cCjcI9+ntwjHYthS3uL

r4VubemnfS0d7VUd7e6tdEt7a2t3W2kX7XKV9rT4mks7ut3eptaOntIWvc7X

7O3oSZ1w1b073R5WruYrWuxqg7bcoNVq7LSuqcmu07rVdqeLXm9X63aqy7W0

xql16VkzdqqmvbtjZ0IoMX/ES3s8bq+doltbE4TqXo/3HzwPuXvsq4dqtdfb

4e1y+2768LCvr9Xd4O7ybXL77DXtPvdjbi8U29l8k8NKt7ugJOV10z53ioM1

oFaXkvBwVpfpNbpyp0tfptXQBlKnc+nWl5HlGrerXKsp0xvKNDpa30Tq4blt

h8c29LTVfb+L9va0tD+y9eZZ/6+d9KU9rfbEQv8J4vY7Qm7/NzC33wp6rf02

2O13wN1+G/D225C33wK9/Y7Y228D3357BIIvPf8P0+Ch7Q==

"]]], "orcInstance" ->

140467786380800, "orcModuleId" -> 1, "targetMachineId" ->

140467786361856], 4886855856, 4886855712, 4886855744, 4886855680,

"{\"Integer64\"} -> \"Integer64\""][50]


There’s a slight catch, however. By default, FunctionCompile will generate raw low-level code for the type of computer on which you’re running. But if you take the resulting CompiledCodeFunction to another type of computer, it won’t be able to use the low-level code. (It keeps a copy of the original expression before compilation, so it can still run, but won’t have the efficiency advantage of compiled code.)

In Version 12.3, there’s a new option to FunctionCompile: TargetSystem. And with TargetSystem → All you can tell FunctionCompile to create cross-compiled low-level code for all current systems:

fun = FunctionCompile[Function[Typed[x, "Integer64"], x + 1], TargetSystem -> All]

Needless to say, it’s slower to do all that compilation. But the result is a portable object that contains low-level code for all current platforms:

fun[[1]]["CompiledIR"]

So if you have a notebook—or a Wolfram Function Repository entry—that contains this kind of CompiledCodeFunction you can send it to anyone, and it will automatically run on their system.

There are a few other subtleties to this. The low-level code created by default in FunctionCompile is actually LLVM IR (intermediate representation) code, not pure machine code. The LLVM IR is optimized for each particular platform, but when the code is loaded on the platform, there’s the small additional step of locally converting to actual machine code. You can use UseEmbeddedLibrary → True to avoid this step, and pre-create a complete library that includes your code.

This will make it slightly faster to load compiled code on your platform, but the catch is that creating a complete library can only be done on a specific platform. We’ve built a prototype of a cloud-based compilation-as-a-service system, but it’s not yet clear if the speed improvement is worth the trouble.
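For reference, here is a minimal sketch of that option applied to the same simple function used earlier. It assumes whatever local toolchain the library build relies on is available on your machine; funLib is a hypothetical name.

```wolfram
(* Ask for a complete embedded library instead of LLVM IR
   that gets converted to machine code at load time *)
funLib = FunctionCompile[
   Function[Typed[x, "Integer64"], x + 1],
   UseEmbeddedLibrary -> True];

(* The result is called like any other compiled function *)
funLib[50]
```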

Another new compiler feature for Version 12.3 is that FunctionCompile can now take a list or association of functions that are compiled together, optimizing with all their interdependencies.
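A minimal sketch of the association form might look like this. The keys "inc" and "square" and the name funs are hypothetical, and the sketch assumes the result is an association of compiled functions under the same keys.

```wolfram
(* Compile two functions together in a single call *)
funs = FunctionCompile[<|
    "inc" -> Function[Typed[x, "Integer64"], x + 1],
    "square" -> Function[Typed[x, "Integer64"], x*x]
    |>];

(* Look up each compiled function by its key and apply it *)
funs["inc"][50]
funs["square"][7]
```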

The compiler continues to get stronger and broader, with more and more functions and types (like "Integer128") being supported. And to support larger-scale compilation projects, something added in Version 12.3 is CompilerEnvironmentObject. This is a symbolic object that represents a whole collection of compiled resources (for example defined by FunctionDeclaration) that act like a library and can immediately be used to provide an environment for additional compilation that is being done.
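To give a feel for the kind of declaration such an environment collects, here is a small sketch that passes a single FunctionDeclaration directly to FunctionCompile. It does not build a CompilerEnvironmentObject itself, and it assumes the two-argument form FunctionCompile[declaration, function]; addOne, decl and cf are hypothetical names.

```wolfram
(* Declare a typed helper function for the compiler *)
decl = FunctionDeclaration[addOne,
   Typed[{"MachineInteger"} -> "MachineInteger"]@
    Function[arg, arg + 1]];

(* Compile a function that calls the declared helper *)
cf = FunctionCompile[decl,
   Function[Typed[arg, "MachineInteger"], addOne[arg]*2]];

cf[10]
```

An environment plays the same role for many declarations at once, so they do not have to be supplied again for each compilation.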

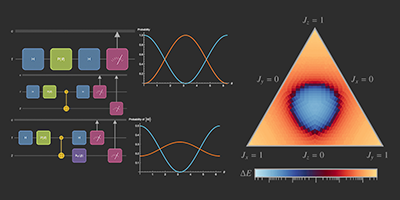

Distributed Computing & Its Management (May 2021)

We first introduced parallel computation in Wolfram Language in the mid-1990s, and in Version 7.0 (2008) we introduced functions like ParallelMap and Parallelize. It’s always been straightforward to set up parallel computation on multiple cores of a single computer. But it’s been more complicated when one also wants to use remote computers.
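As a reminder of what the single-computer case looks like, here is a minimal sketch. It assumes local subkernels are available, in which case they are normally launched automatically on first use.

```wolfram
(* Apply a function to each element, distributing the work
   over whatever local kernels are available *)
ParallelMap[PrimeQ, Range[10]]

(* Parallelize takes an ordinary expression and tries to run it in parallel *)
Parallelize[Map[PrimeQ, Range[10]]]

(* Both should agree with the sequential result *)
Map[PrimeQ, Range[10]]
```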

In Version 12.2 we introduced RemoteKernelObject as a symbolic representation of remote Wolfram Language capabilities, initially available for one-shot evaluations with RemoteEvaluate. In Version 12.3 we’ve integrated RemoteKernelObject into parallel computation.

Let’s try this for one of my machines. Here’s a remote kernel object that represents a single kernel on it:

RemoteKernelObject["threadripper2"]

Now we can do a computation there, here just asking for the number of processor cores:

RemoteEvaluate[%, $ProcessorCount]

Now let’s create a remote kernel object that uses all 64 cores on this machine:

RemoteKernelObject["threadripper2", "KernelCount" -> 64]

Now I can launch those kernels (and, yes, it’s much faster and more robust than before):

LaunchKernels[%]

Now I can use this to do parallel computations:

ParallelEvaluate[$ProcessID, %]

For someone like me who is often involved in doing parallel computations, the streamlining of these capabilities in Version 12.3 will make a big difference.

One feature of functions like ParallelMap is that they’re basically just sending pieces of a computation independently to different processors. Things can get quite complicated when there needs to be communication between processors and everything is happening asynchronously.

The basic science of this is deeply related to the story of multiway graphs and to the origins of quantum mechanics in our Physics Project. But at a practical level of software engineering, it’s about race conditions, thread safety, locking, etc. And in Version 12.3 we’ve added some capabilities around this.

In particular, we’ve added the function WithLock that can lock files (or local objects) during a computation, thereby preventing interference between different processes which attempt to write to the file. WithLock provides a low-level mechanism for ensuring atomicity and thread safety of operations.
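A minimal sketch of a locked read-modify-write might look like this. It assumes the form WithLock[File[path], expr]; the file name counter.txt and the helper increment are hypothetical.

```wolfram
(* A data file in the temporary directory, initialized to 0 *)
path = FileNameJoin[{$TemporaryDirectory, "counter.txt"}];
Put[0, path];

(* Read, increment and write back while holding the lock on the file,
   so another process using the same lock cannot interleave its update *)
increment[] := WithLock[File[path], Put[Get[path] + 1, path]];

Do[increment[], 5];
Get[path]
```

The lock only protects against other code that also goes through WithLock on the same file; here everything runs in one kernel, so the sketch shows the pattern rather than actual contention.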

There’s a higher-level version of this in LocalSymbol. Say one sets a local symbol to 0:

LocalSymbol["/tmp/counter"] = 0

Then launch 40 local parallel kernels (they need to be local so they share files):

LaunchKernels[40];

Now, because of locking, the counter will be forced to update sequentially on each kernel:

ParallelEvaluate[LocalSymbol["/tmp/counter"]++]

LocalSymbol["/tmp/counter"]
