In or out of school, the opportunities to learn and grow in your career are endless, and Wolfram is proud to support them with educational resources ranging from courses to textbooks. We are happy to share conversations with two authors whose books apply Wolfram technology to astrophysics and geography, and to highlight a few other recent book releases featuring Wolfram Language. Whether you're building your summer reading list or preparing to wow interviewers, these titles offer essential insights into real-world computational STEM work.

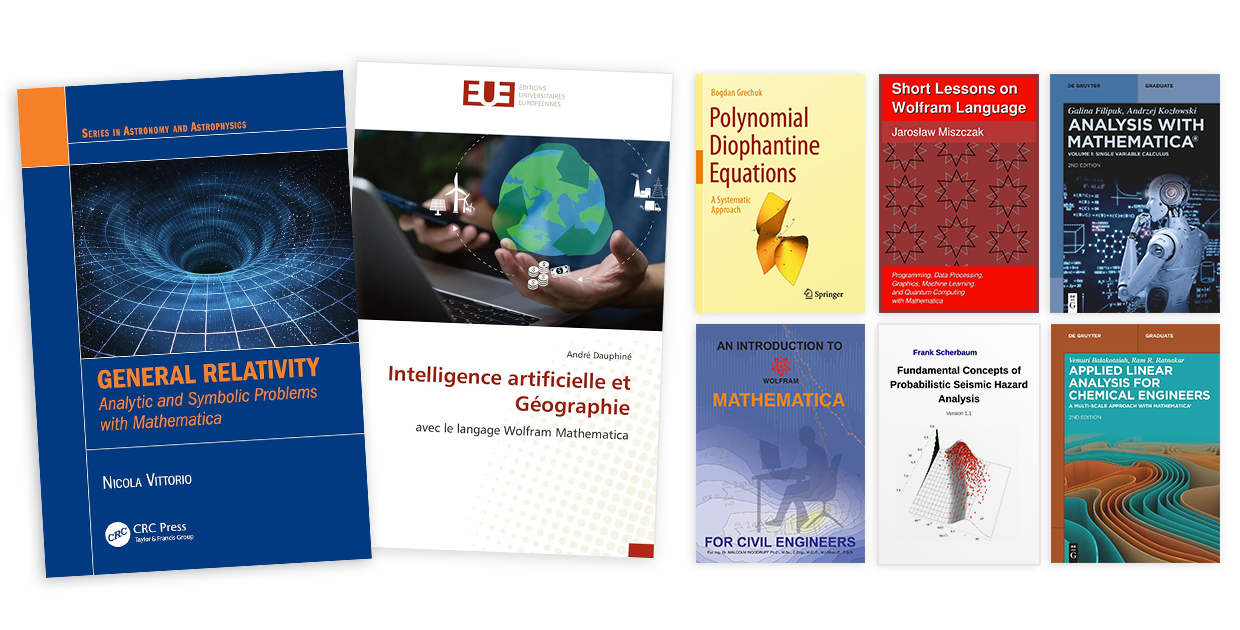

General Relativity: Analytic and Symbolic Problems with Mathematica

General Relativity: Analytic and Symbolic Problems with Mathematica was published by CRC Press in 2025. According to a review by Paolo Pani of the Sapienza University of Rome, the book “combines analytical rigor with the power of symbolic manipulation software to tackle a wide range of problems in Einstein’s theory of gravity. It offers a truly ‘hands-on’ approach to learning general relativity, guiding readers through both the conceptual and technical aspects of the subject while introducing advanced features of Wolfram Mathematica.” We discussed this new book with the author, Nicola Vittorio.

Could you tell us a bit about yourself?

I am an emeritus professor at the University of Rome Tor Vergata, where for many years I taught relativity and cosmology in the physics department's master's program. My research focused on theoretical cosmology, particularly on making predictions about the formation and evolution of the universe's large-scale structure. In this capacity, I served as a coinvestigator for the European Space Agency's Planck mission. I have published 250 articles in refereed journals and authored the following textbooks: Cosmology (2020), An Overview of General Relativity and Space-Time (2022) and General Relativity: Analytic and Symbolic Problems with Mathematica (2025). Additionally, I have served as dean of the faculty of science at my university and as president of the Association of Deans of the Italian Faculty of Sciences, and I have been a member of the technical secretariat of the Ministry for Education and Research.

Why did you decide to write this book?

In light of this experience, I decided to collect the Mathematica code I had developed over the years into a book. I have found that when students create their own versions of the Mathematica notebooks, even by copying, they more quickly acquire the skills needed to use the software effectively. That said, for the convenience of both students and teachers, a significant fraction of this code is available online.

What is a feature of your book that you’re most excited for your readers to experience?

I shared highly illustrative Mathematica notebooks with my class, which was quite diverse. The students came from various educational backgrounds—most had a bachelor’s in physics, while others had a bachelor’s in mathematics, and many were international students. Their interests varied; some were more focused on theoretical aspects, while others were interested in experimental results and astrophysical applications. Writing numerical codes helped students grasp the formal aspects of tensor calculus more easily. Additionally, being in a computer lab fostered collaboration among all students and created a strong team spirit within the class. Several Mathematica codes were developed to generate plots of different theoretical scenarios, such as the perihelion advance of Mercury or the shape of the horizons of a Kerr black hole. I found it particularly rewarding for students to create these plots themselves, rather than simply finding similar ones in a book.

Who do you want to see reading this book?

The book promotes a "learning by doing" approach. The lectures minimize abstract formalization and focus on problem solving, even for specific analytical derivations. This method effectively captures students' attention and makes it easier to connect various problems and topics. Once the meaning and use of all the necessary geometric objects have been explained, Mathematica software, freely available to all students [at universities with site license access], is used to perform the symbolic calculations. This approach has the advantage of bypassing lengthy and often tedious mathematical steps. For example, deriving the Christoffel symbols or writing the Riemann tensor for different spacetime metrics is tackled quite easily by all the students in the class. I found the results of this experiment quite encouraging. I am curious to see whether this approach produces similar outcomes in other settings, with different teaching methods and learning strategies, so feedback from instructors would be greatly appreciated.

What kind of general relativity problems does this book go over?

The book covers the traditional topics of general relativity and cosmology, which are usually part of a master’s-level physics course. The problems, both analytical and symbolic, include subjects like tensor calculus, metric geometry, covariant derivatives, geodesics and spacetime curvature. It also discusses the equivalence principle and the Einstein field equations. Furthermore, the book examines applications such as the classical tests of general relativity, linearized gravity with scalar and tensor modes, gravitational waves, Schwarzschild solutions, charged and rotating black holes, relativistic hydrodynamics and cosmology.

When were you first introduced to Wolfram Language and how has it changed your work?

A few years back, I mainly taught myself how to use it. My use of Mathematica grew a lot when I was working on the Cosmology book. The goal was to check long analytical calculations. I wouldn’t call myself an expert in Mathematica, but I have the basic skills necessary to get it to do what I need.

How do you see software like Mathematica being used for physics and astrophysics students?

As previously mentioned, the benefit lies in equipping students with numerical and symbolic tools that can be advantageous in other areas of their research and future careers. However, a word of caution is necessary with this approach. While students will become more efficient in the latter part of the course, they may initially encounter a “static friction” with programming, as not all students are familiar with it. Therefore, it is crucial to carefully balance the number of topics covered in the course with the numerical implementations of some of them, based on the average response of the class. Nonetheless, I have found that 48 hours of lectures and eight afternoons in a computer lab are more than adequate to cover most of the standard topics that define an introductory course in general relativity within a master’s program in physics.

Do you plan on writing any more books? And if so, what do you want them to focus on?

I will soon begin working on the second edition of my book Cosmology. In this second edition, I plan to use a similar approach by including Mathematica code for solving different problems.

Intelligence artificielle et Géographie avec le langage Wolfram Mathematica (Artificial Intelligence and Geography with Wolfram Mathematica)

Intelligence artificielle et Géographie avec le langage Wolfram Mathematica by André Dauphiné was published by Éditions Universitaires Européennes in 2025. According to the book’s description, “[t]his book aims to introduce geographers to artificial intelligence using machine learning models… The second part presents the Wolfram Mathematica programming language. How can machine learning models be created to answer geographical questions?” We discussed the new book with Dauphiné.

Could you tell us a bit about yourself?

I am a retired professor from Côte d’Azur University, where I directed a CNRS-affiliated laboratory. For four years, I was the director of humanities and social sciences at the Ministry of Higher Education and Research in Paris.

In one sentence, how would you describe your book to the layman?

An introduction to artificial intelligence for geographers, with original application exercises developed in Mathematica.

What is your goal with writing this book?

To show how, with very simple programs, artificial intelligence can address very different geographical questions (forecasting, recognition of territorial structures, etc.).

Who do you want to see reading this book?

This book is aimed at master’s students, then at young researchers and established geographers who want to discover machine learning.

What made you want to focus on Mathematica in your book?

I’ve been using Mathematica since Version 3. Besides its conciseness and user support, I appreciate the integration of so many modules into a single software program.

What do you think is the most exciting use of artificial intelligence and machine learning models in geography?

Artificial intelligence is a very powerful tool for forecasting in risk assessments, whether they concern climate events, transportation accidents in a city or even urban unrest. Thus, artificial intelligence is becoming an indispensable tool in land-use planning and environmental impact assessments.

How do you see artificial intelligence affecting geographers and their work?

The combination of artificial intelligence and big data allows us to derive laws from very large amounts of data. This should promote geographical studies on a global scale.

What is theoretical geography and how does machine learning relate to it?

Theoretical geography aims to understand territorial organizations at all temporal and spatial scales, in order to find laws that apply to territories that are more or less close. For example, the center-periphery rule can be observed at the scale of a village, a state or the world. It is a multifractal law.

Do you see yourself writing another book in this same vein?

Not on this topic, but rather on multiscale geographical systems using recurrence, wavelet, entropy and fractal models. Mathematica allows us to use all these forms of modeling.

What Else Is New?

Polynomial Diophantine Equations: A Systematic Approach

Bogdan Grechuk published Polynomial Diophantine Equations: A Systematic Approach with Springer in 2024. The book describes a new approach to the study of Diophantine equations, one that makes the subject accessible to anyone with a high-school knowledge of mathematics. Springer describes the intended audience for the book as "undergraduate students, for whom the book will serve as an unusually rich introduction to the topic of Diophantine equations" while also stating that it will be useful for graduate students, PhD students and researchers as a source of "fascinating open questions of varying levels of difficulty." The book earned a Featured Contributor Badge on Wolfram Community, where it originally appeared as a Wolfram Notebook with many examples and solutions using Wolfram Language.

Short Lessons on Wolfram Language: Programming, Data Processing, Graphics, Machine Learning, and Quantum Computing with Mathematica

Jarosław Miszczak published Short Lessons on Wolfram Language: Programming, Data Processing, Graphics, Machine Learning, and Quantum Computing with Mathematica in 2024. The book's main purpose is "to introduce the basic concepts required to start using Mathematica." If you are a new user of Wolfram Language, this book is meant for you. Miszczak presents guides to using Wolfram Language across different disciplines and shows how to apply these tools in brief overviews of machine learning and quantum computing. An interactive excerpt from one of the book's quantum lessons is available on Community, where it was awarded a Featured Contributor Badge.

An Introduction to Wolfram Mathematica for Civil Engineers

Malcolm Woodruff's An Introduction to Wolfram Mathematica for Civil Engineers offers a graceful introduction to Wolfram Language for engineers who may not have been exposed to it in their training and profession. Woodruff describes Wolfram as a "cost-effective tool that should be in every engineer's toolbox." The book begins with setting up industry-standard units and symbols and ends with native finite element modeling (FEM). You can get a free copy of the book on the Wolfram Notebook Archive.

Fundamental Concepts of Probabilistic Seismic Hazard Analysis

Frank Scherbaum's Fundamental Concepts of Probabilistic Seismic Hazard Analysis earned him a Featured Contributor Badge on Community and is available for free on the Notebook Archive. The book grew out of Scherbaum's professional and teaching experience in probabilistic seismic hazard analysis. Using Wolfram Language to illustrate real-world examples, he focuses on concepts rather than procedures, helping readers understand seismic hazard analysis at a deeper level.

Analysis with Mathematica and Applied Linear Analysis for Chemical Engineers

Rounding out our coverage of new Wolfram Language books, we would like to point toward two titles we have covered in previous posts that have new second editions available. Check out Analysis with Mathematica by Galina Filipuk and Andrzej Kozłowski and Applied Linear Analysis for Chemical Engineers by Vemuri Balakotaiah and Ram R. Ratnakar.

If you’re interested in finding more books that use Wolfram Language, check out the full collection. If you’re working on a book about Mathematica or Wolfram Language, contact us to find out more about our options for author support and to have your book featured in an upcoming blog post!